Interesting.

Though there are misconceptions, like the Android/AOSP XMPP clients being pay-to-download. They are on the official Google Play store, but they are FLOSS apps, and you can get them at no cost on F-Droid (of course one can contribute to the developers or the F-Droid devs as well):

https://f-droid.org/en/packages/eu.siacs.conversations

https://f-droid.org/en/packages/de.monocles.chat

https://f-droid.org/en/packages/com.cheogram.android

https://f-droid.org/en/packages/org.snikket.android/

And some others. No matter the country, people would do themselves a favor by starting to use F-Droid, which has infrastructure and a build process in place that try to detect and get rid of non-FLOSS components, which in general are not good privacy-wise.

I don’t think I get the Snikket route: you don’t get both a client and a server at the same time, do you? Also, if you self-host your own server, that’ll be better for you, Snikket or not; I don’t see how that relates to the Snikket route in particular.

And actually I wouldn’t recommend Quicksy. Why provide your phone number, and why look up XMPP addresses by phone number? I don’t think that plays nicely with privacy.

Where did you get this idea?

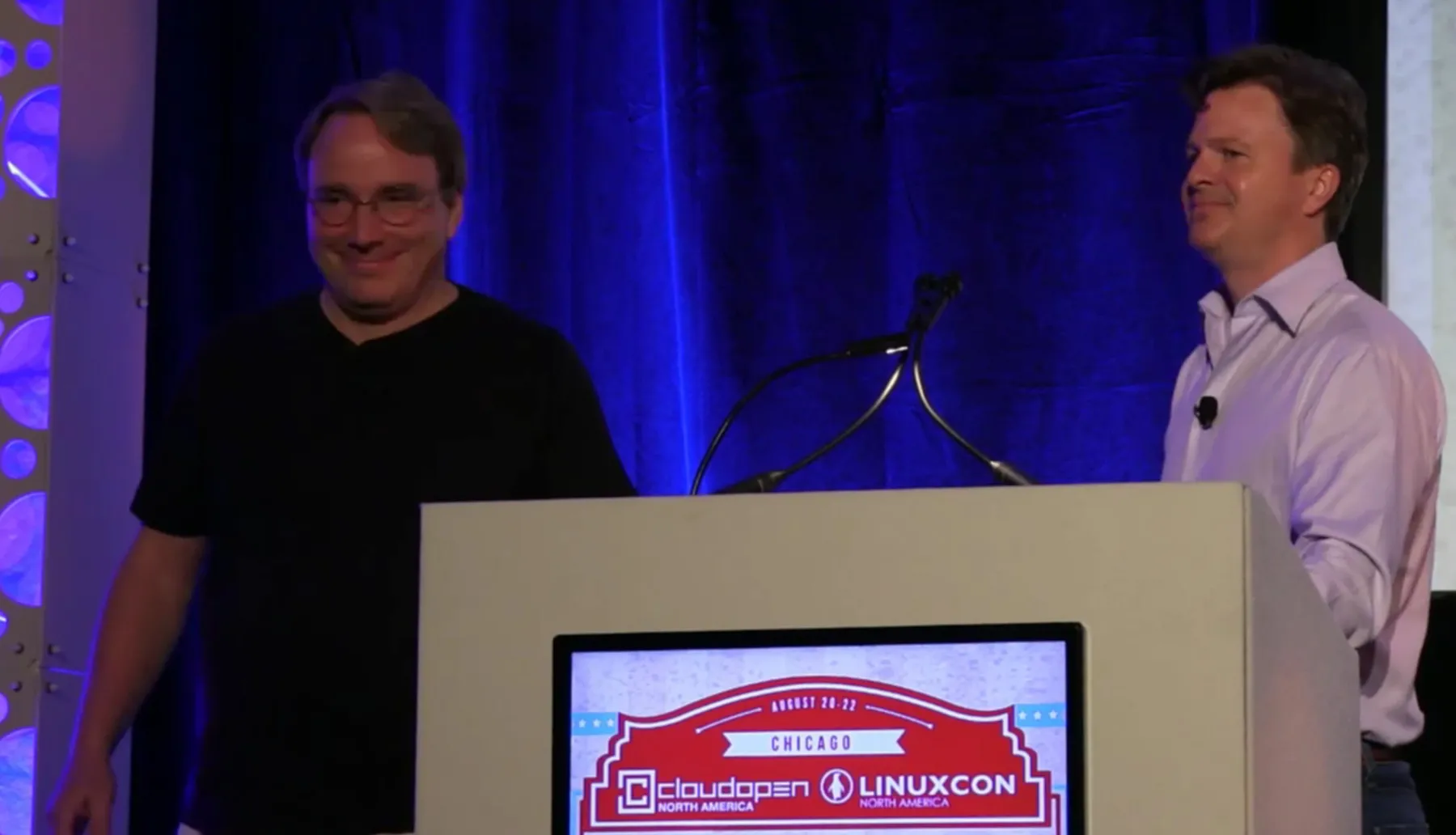

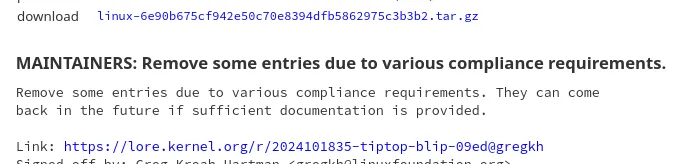

I think you are confusing the FSF with the Linux Foundation; you can see MS among the platinum LF members. Was that it, or did you really mean the FSF?