having contributors sign a CLA is always very sus and I think this is indicative of the project owners having some plans of monetizing it even though it is currently under AGPLv3. Their core values of no dark patterns and whatnot seem like a sales argument rather than an actual motivation/principle, especially when you see that they are a bootstrapped startup.

Thanks for pointing that out. Looks like they’re working on a Server Suite. I’d guess they’ll try to monetize that but leave the personal desktop version free.

I mean anybody can fork it and keep developing it without a CLA under AGPL3.

Yeah, it’s easy to fall into a negativity bias instead of doing a risk-benefit analysis. The company could be investing money and resources that open source projects otherwise lack, especially professional work by non-programmers (e.g. UX researchers), which is something open source projects usually miss.

You could probably figure it out by going over the contributions.

Of course, I am not against software being open-source, and I much prefer this approach of companies making their software open-source, but it’s the CLA that really bothers me. I like companies contributing to the FOSS ecosystem. What I don’t like is companies trying to benefit from free contributions while keeping the possibility of changing the license of the code from those contributors.

I’m starting to come around to big corps running their custom enhanced versions while feeding their open source counterparts with the last gen weights. As much as I love open source, people need to eat.

As was mentioned, if they start doing something egregious, they’re not the only game in town, and can also be forked. Love it or hate it, a big corp sponsor makes Joe six-pack feel a little more secure in using a product.

Free as in freedom, not free as in beer.

GPLv3 allows you to sell your work for money, but you still have to hand over the code your customers purchased. You buy our product, you own it, as is. Do whatever you like with it, but if you sell a derivative, you better cough up the new code to whoever bought it.

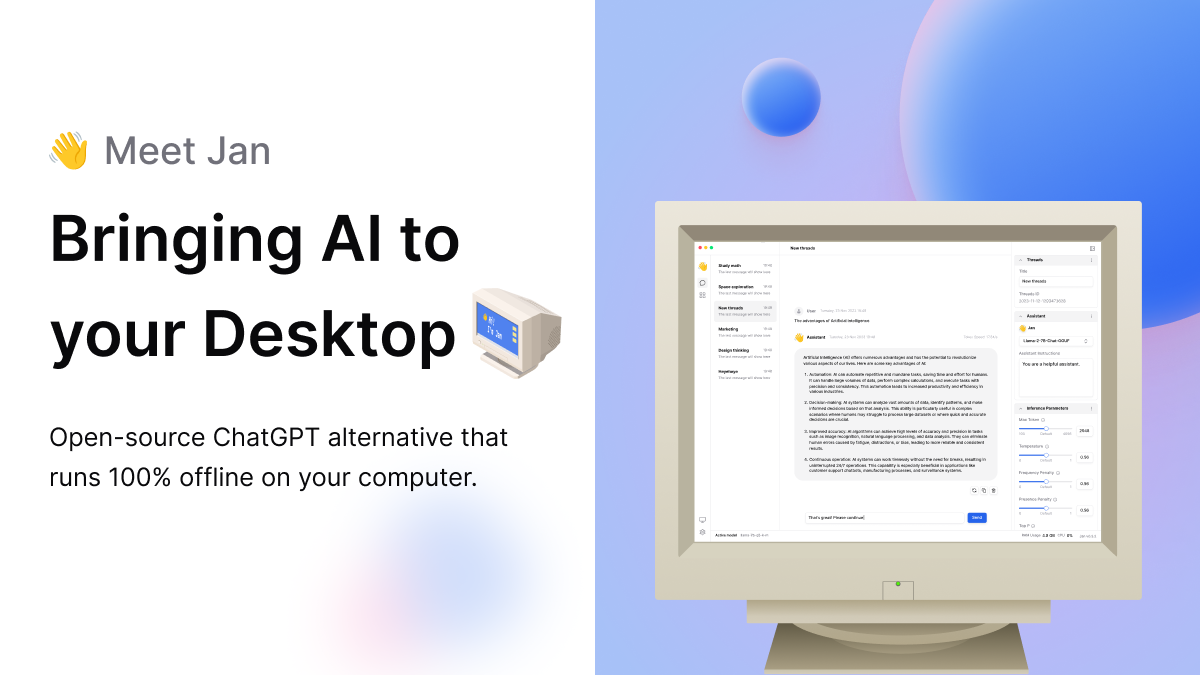

> that runs 100% offline on your computer.

Goddamn, that’s wonderful!

Does this differ from Ollama + Open WebUI in any way?

Depends. Are either of those companies bootstrapping a for-profit startup and trying to dupe people into contributing free labor prior to their inevitable rug pull/switcheroo?

Do explain how you dupe people into contributing free labor and do a switcheroo with an open source project. All the app does is just provide a nice UI for running models.

I think they meant to imply that the original post (not yours) had suspicious intentions, while the ones you cited were more trustworthy

This is a desktop application, not something you need to host.

Ok I tried it out and as of now Jan has a better UI/UX imo (easier to install and use), but Open WebUI seems to have more features like document/image processing.

“100% Open Source”

[links to two proprietary services]

Why are so many projects like this?

I imagine it’s because a lot of people don’t have the hardware that can run models locally. I do wish they didn’t bake those in though.

Other offline tools I’ve found:

GPT4All

RWKV-Runner

any feelings on what you like best / works best?

They all work well enough on my weak machine with an RX580.

Buuuuuuuuuut, RWKV has some kind of optimization thing going that makes it two or three times faster to generate output. The problem is that you have to be more aware of the order of your input. It has a hard time going backwards to a previous sentence, for example.

So you’d want to say things like “In the next sentence, identify the subject.” and not “Identify the subject in the previous text.”

Anyone interested in a local llm should check out Llamafile from Mozilla.

I’ve been using Jan for a while now. It’s great!

Would you say it’s noob-friendly?

Yes

It’s extremely noob friendly. You really don’t need any prior knowledge to start using this.

Very. Just have a good enough internet connection and hardware to download and run models. The models are 4-41 GB, and interrupted downloads must start over. Otherwise find the source, use wget, and download to the correct folder.
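For the manual route, `wget -c` will continue a partial file instead of starting over. If you'd rather script it, here is a minimal Python sketch of the same idea using an HTTP `Range` header. The function names, URL, and file names are made up for illustration, and it assumes the server hosting the model supports range requests, which not every host does.

```python
import os
import urllib.request


def resume_request(url: str, dest: str) -> urllib.request.Request:
    """Build a request that asks only for the bytes we don't have yet."""
    request = urllib.request.Request(url)
    have = os.path.getsize(dest) if os.path.exists(dest) else 0
    if have > 0:
        # Ask the server to start at the first byte we are missing.
        request.add_header("Range", f"bytes={have}-")
    return request


def download(url: str, dest: str, chunk_size: int = 1 << 20) -> None:
    """Download url to dest, appending to a partial file when possible.

    If dest is already complete, many servers answer 416 and urlopen
    raises urllib.error.HTTPError; this sketch does not handle that case.
    """
    request = resume_request(url, dest)
    with urllib.request.urlopen(request) as response:
        # 206 means the server honoured the Range header, so append.
        # Any other success status means it sent the whole file again.
        mode = "ab" if response.status == 206 else "wb"
        with open(dest, mode) as out:
            while chunk := response.read(chunk_size):
                out.write(chunk)
```

You would call something like `download(model_url, "model.gguf")` again after an interruption, then move the file into whatever folder the app reads models from.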

Is there a model you prefer? I’ve been throwing the exact same question to different models and they seem to all give a very similar answer.

Also, how is it getting certain information if it’s all offline? For example, I asked it to recommend some bike products, and gave very specific brands and models.

Train it online. Use it offline.

That’s crazy impressive, though. I’ve been playing with it more, and it’s very specific about certain things. I guess you can hold a lot of data in the GB of space these models use.

Agree, no small feat. Two caveats tho:

- These models prioritize plausibility above factual correctness. So verification often is needed.

- Data from after the training material was created is absent, of course.

> These models prioritize plausibility above factual correctness. So verification often is needed.

100%. I was telling my wife that anyone who knows about a subject can easily point out the inaccuracies in the output from any of the models.

But if you don’t know about a subject, the AI gives you an answer that seems like it could be right. Scary to see where this technology takes us, especially when the majority easily digests information without verifying any of it.

Trinity stood out the most to me, it seems to have less unnecessary fluff

I use Stealth or Starling, usually.

Is it better than GPT4All? Do they provide their own model(s) or do we have to download it from other sources?

They provide a hub of models. In my case it was better than GPT4All because it didn’t crash, but I also think it has a nicer user interface.

I’m in the process of installing https://github.com/imartinez/privateGPT and will check this one out afterwards.

The biggest difference seems to be that you can let privateGPT analyze your own files. Didn’t see that functionality in Jan.

One difference is that Jan is incredibly easy to install: just download the AppImage, make it executable and start it.

@jeena And absolutely nothing can go wrong by downloading random files from the internet based on contemporary hype, making them executable and starting them…

As opposed to cloning a random repository and running `make` or something?

@xigoi Is that something you do?

How else would you install something that doesn’t happen to be in your favorite package manager?

@xigoi Are you actually trying to get malware into your computer? Don’t install **random** shiny new things without maximum skepticism. Period. Just let some other fools “test” the minefield for you. Or do a proper inspection. Executing foreign code just because it had “GPT” in the name… and acting like there was no other option… yuck!
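One small piece of a "proper inspection" is checking the download against a checksum before making it executable. A minimal Python sketch, assuming the project publishes a SHA-256 for its release files (the thread doesn't say whether it does); the function names are made up for illustration.

```python
import hashlib
import hmac


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so multi-GB downloads don't need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        while chunk := f.read(chunk_size):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published(path: str, published_hex: str) -> bool:
    """Compare the file's SHA-256 with the hex string the publisher posted."""
    return hmac.compare_digest(sha256_of(path), published_hex.strip().lower())
```

A match only tells you the file is the one the publisher posted, not that the code inside is trustworthy, so it complements skepticism rather than replacing it.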

This looks very cool, especially the part about being able to use it on consumer-grade laptops. Will try it out when I get a chance.

So what exactly is this? Open-source ChatGPT alternatives have existed before and alongside ChatGPT the entire time, in the form of downloading oobabooga or a different interface and downloading an open source model from Hugging Face. They aren’t competitive because users don’t have terabytes of VRAM or AI accelerators.

Edit: spelling. The Facebook LLM is pretty decent and has a huge amount of tokens. You can install it locally and feed your own data into the model so it will become tailor made.

I think you mean tailor. As in, clothes fitted to you.

Lol. My mistake.

Good boy Taylor.

Exactly, my auto carrot likes Taylor.

It’s basically a UI for downloading and running models. You don’t need terabytes of VRAM to run most models though. A decent GPU and 16 gigs of RAM or so works fine.

What are the hardware requirements?

Depends on the size of the model you want to run. Generally, having a decent GPU and at least 16 gigs of RAM is helpful.
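As a rough rule of thumb, the weights alone take about (number of parameters × bits per weight ÷ 8) bytes. A minimal Python sketch of that arithmetic; the 1.2 overhead factor for context and runtime buffers is my own guess and not a measured number, and the function names are made up for illustration.

```python
def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float, overhead: float = 1.2) -> bool:
    """Rough check: weights plus an assumed overhead factor vs. available memory."""
    return weight_size_gb(params_billion, bits_per_weight) * overhead <= memory_gb


# A 7B model quantized to 4 bits per weight: 7 * 4 / 8 = 3.5 GB of weights.
print(weight_size_gb(7, 4))
# The same 7B model at 16 bits per weight: 7 * 16 / 8 = 14 GB of weights.
print(weight_size_gb(7, 16))
```

By this estimate a 4-bit 7B model fits comfortably in 16 GB, while a 4-bit 70B model (about 35 GB of weights) does not.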