No one here is mentioning that Crysis released right when single core processors were maxing out their clock speed while dual and quad core processors were basically brand new. It wasn’t obvious to software developers that we wouldn’t have 12GHz processors in a couple years because the entire industry would just keep adding more and more cores rather than sizing up each core.

So a big bottleneck for Crysis was that it would max out single core performance on every PC for years because single core clock speed didn’t improve very much after that point.

The joke is i don’t think any “future pcs” can run crysis now since it’s not compatible with new windows versions iirc. I got a cobwebbed copy i can’t play in my steam library rn

It works on Linux on Proton, at least.

Unfortunately, they miscalculated and thought that single-threaded performance (specifically higher clock speeds) would continue to advance at the same pace as it had before the release of Crysis, but what ended up happening instead was more focus on multithreading: CPUs shipped with more cores and comparatively smaller IPC improvements.

IPC improvements were obviously still a thing, but it took a while for hardware to be able to run Crysis maxed out at good framerates.

It also wasn’t exactly the best-optimized game. Call of Duty 4 came out the same year and looked spectacular without crushing your computer.

Still looking great at FHD resolution.

If you do that today gamers will cry about it being “unoptimized”

The weird thing is that it had low quality settings to make less powerful pcs be able to run it.

I finished the whole game on a Core 2 Duo and some Nvidia GPU from the same era that I don’t even remember.

And you could fine tune basically any setting.

I wish they hyped that as cool too, I never even tried to run it lol

So poorly optimised you need future technology to run it isn’t the future proofing strategy I’d go with, but ok…

So, I’ve seen this phenomenon discussed before, though I don’t think it was from the Crysis guys. They’ve got a legit point, and I don’t think that this article does a very clear job of describing the problem.

Basically, the problem is this: as a developer, you want to make your game able to take advantage of computing advances over the next N years other than just running faster. Okay, that’s legit, right? You want people to be able to jack up the draw distance, use higher-res textures further out, whatever. You’re trying to make life good for the players. You know what the game can do on current hardware, but you don’t want to restrict players to just that, so you let the sliders enable those draw distances or shadow resolutions that current hardware can’t reasonably handle.

The problem is that the UI doesn’t typically indicate this in very helpful ways. What happens is that a lot of players who have just gotten themselves a fancy gaming machine, immediately upon getting a game, go to the settings and turn them all up to maximum so that they can take advantage of their new hardware. If the game doesn’t run smoothly at those settings, then they complain that the game is badly written. “I got a top of the line GeForce RTX 4090, and it still can’t run Game X at a reasonable framerate. Don’t the developers know how to do game development?”

To some extent, developers have tried to deal with this by using terms that sound unreasonable, like “Extreme” or “Insane” instead of “High” to help to hint to players that they shouldn’t be expecting to just go run at those settings on current hardware. I am not sure that they have succeeded.

I think that this is really a UI problem. That is, the idea should be to clearly communicate to the user that some settings are really intended for future computers. Maybe “Future computers”, or “Try this in the year 2028” or something. I suppose that games could just hide some settings and push an update down the line that unlocks them, though I think that that’s a little obnoxious and would rather not have that happen on games that I buy – and if a game company goes under, they might never get around to being unlocked. Maybe if games consistently had some kind of really reliable auto-profiling mechanism that could go run various “stress test” scenes with a variety of settings to find reasonable settings for given hardware, players wouldn’t head straight for all-maximum settings. That requires that pretty much all games do a good job of implementing that, or I expect that players won’t trust the feature to take advantage of their hardware. And if mods enter the picture, then it’s hard for developers to create a reliable stress-test scene to render, since they don’t know what mods will do.
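To make the auto-profiling idea a bit more concrete, here is a minimal sketch: time a stress-test scene at each preset, most demanding first, and keep the first one that holds a target frame time. Everything in it is hypothetical (the preset names, `pick_preset`, and the made-up frame costs that stand in for a real renderer), and it does nothing about the mod problem mentioned above.

```python
from statistics import median

# Hypothetical presets, most demanding first.
PRESETS = ["future", "ultra", "high", "medium", "low"]

def pick_preset(measure_frame_ms, target_fps=60.0, frames=30):
    """Return the most demanding preset whose median frame time meets target_fps.

    measure_frame_ms(preset) is an assumed callback that renders one frame of
    a stress-test scene and returns how long it took in milliseconds.
    """
    budget_ms = 1000.0 / target_fps
    for preset in PRESETS:
        samples = [measure_frame_ms(preset) for _ in range(frames)]
        if median(samples) <= budget_ms:
            return preset
    return PRESETS[-1]  # nothing met the budget; fall back to the lowest preset

# Stand-in for a real renderer: fixed, made-up frame costs per preset.
FAKE_COST_MS = {"future": 55.0, "ultra": 24.0, "high": 15.0, "medium": 9.0, "low": 5.0}

def fake_measure(preset):
    return FAKE_COST_MS[preset]

print(pick_preset(fake_measure, target_fps=60.0))
print(pick_preset(fake_measure, target_fps=30.0))
```

Using the median rather than the mean is just one choice here; it keeps a single hitch during the test from dragging a preset down.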

Console games tend to solve the problem by just taking the controls out of the player’s hands. The developers decide where the quality controls are, since players have – mostly – one set of hardware, and then you don’t get to touch them. The issue is really on the PC, where the question is “should the player be permitted to push the levers past what current hardware can reasonably do?”

This is why I really respect when a game has very clear separations on the slider indicating that one end is very intensive, bonus points if the game warns me that it might not run well at maxed settings.

Yeah, I agree that the “this particular setting is performance-intensive” thing is helpful. But one issue that developers hit is that when future hardware enters the picture, it’s really hard to know what exactly the impact is going to be, because you have to also kind of predict where hardware development is going to go, and you can get that pretty wrong easily.

Like, one thing that’s common to do with performance-critical software like games is to profile cache use, right? Like, you try and figure out where the game is generating cache misses, and then work with chunks of data that keep the working set small enough that you can stay in cache where possible.

I’ve got one of those X3D Ryzen processors where they jacked on-die cache way, way up, to 128MB. I think I remember reading that AMD decided that on the net, the clock tradeoff entailed by that wasn’t worth it, and was intending to cut the cache size on the next generation. So a particular task that blows out the cache above a certain data set size – when you move that slider up – might have horrendous performance impact on one processor and little impact on another with a huge cache…and I’m not sure that a developer would have been able to reasonably predict that cache sizes would rise so much and then maybe drop.
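A back-of-the-envelope sketch of that cache cliff, with entirely assumed numbers (64 bytes per object, the object counts behind each slider position, and the two cache sizes are illustrative, not measurements of any real game or CPU):

```python
MIB = 1024 * 1024

def working_set_bytes(object_count, bytes_per_object):
    """Rough working-set size for one pass over a flat array of objects."""
    return object_count * bytes_per_object

def fits_in_cache(object_count, bytes_per_object, cache_bytes):
    """True if one pass over the objects stays within the given cache size."""
    return working_set_bytes(object_count, bytes_per_object) <= cache_bytes

# Assumed numbers, purely for illustration.
BYTES_PER_OBJECT = 64
CACHES = {"32 MiB cache": 32 * MIB, "128 MiB cache": 128 * MIB}
SLIDER = {"medium": 250_000, "high": 500_000, "max": 1_500_000}  # objects in view

for setting, count in SLIDER.items():
    for name, size in CACHES.items():
        verdict = "fits" if fits_in_cache(count, BYTES_PER_OBJECT, size) else "spills"
        print(f"{setting:>6} on {name}: {verdict}")
```

With these numbers the "max" position spills out of the smaller cache but still fits in the larger one, so the same slider step would look cheap on one machine and expensive on the other.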

I remember – this is a long time ago now – when one thing that video card vendors did was to disable antialiased line rendering acceleration on “gaming” cards. Most people using 3D cards to do 3D modeling really wanted antialiased lines, because they spent a lot of time looking at wireframes, and wanted them to look nice. They were using the hardware for real work and were less price-sensitive. Video card vendors decided to try and differentiate the product so that they could use price discrimination. Okay, so imagine that you’re a game developer and you say that antialiased lines – which I think most developers would just assume would become faster and faster – don’t have a large performance impact…and then the hardware vendors start disabling the feature on gaming cards, so suddenly newer cards are maybe slower at rendering them than earlier cards. Now your guidance is wrong.

Another example: Right now, there are a lot of people who are a lot less price sensitive than most gamers wanting to use cards for parallel compute to run neural nets for AI. What those people care a lot about is having a lot of on-card memory, because that increases the model size that they can run, which can hugely improve the model’s capabilities. I would guess that we may see video card vendors try to repeat the same sort of product differentiation, assuming that they can manage to collude to do so, so that they can charge people who want to run those neural nets more money. They might tamp down on how much VRAM they stick on new GPUs aimed at gaming, so that it’s not possible to use cheap hardware to compete with their expensive compute cards. If you’re a developer and thinking that blowing, say, 2x to 3x the VRAM current hardware has N years down the line is reasonable for your game, that…might not be a realistic assumption.

I don’t think that antialiasing mechanisms are transparent to developers – I’ve never written code that uses hardware antialiasing myself, so I could be wrong – but let’s imagine that they are for the sake of discussion. Early antialiasing ran by using what’s today called FSAA. That’s simple and for most things – aside from pinpoint bright spots – very good quality, but gets expensive quickly. Let’s say that there was just some API call in OpenGL that let you get a list of available antialiasing options (“2xFSAA”, “4xFSAA”, etc). Exposing that to the user and saying “this is expensive” would have been very reasonable for a developer – FSAA was very expensive if you were bound by nearly any kind of graphics rendering, since it did quadratically-increasing amounts of what the GPU was already doing. But then subsequent antialiasing mechanisms were a lot cheaper. In 2000, I didn’t think of future antialiasing algorithm improvements – I just thought of antialiasing entailing rendering something at high resolution, then scaling it down, doing FSAA. I’d guess that many developers wouldn’t either.
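For anyone who hasn’t seen it spelled out, the render-big-then-scale-down approach looks roughly like this toy, CPU-only sketch. `render_supersampled`, the `shade` callback, and the 8x8 test image are all made up for illustration, and `scale` here is the factor per axis, so a scale of 2 means four samples per pixel.

```python
def render_supersampled(shade, width, height, scale):
    """Render at `scale` times the resolution on each axis, then box-filter down.

    shade(u, v) is an assumed callback returning brightness in [0, 1] for
    normalized coordinates. Returns (image, number_of_shade_calls).
    """
    hi_w, hi_h = width * scale, height * scale
    calls = 0
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            total = 0.0
            for sy in range(scale):
                for sx in range(scale):
                    # Sample at the centre of each high-resolution sub-pixel.
                    u = (x * scale + sx + 0.5) / hi_w
                    v = (y * scale + sy + 0.5) / hi_h
                    total += shade(u, v)
                    calls += 1
            row.append(total / (scale * scale))  # average the block
        image.append(row)
    return image, calls

# Hard diagonal edge: white where v > u, black elsewhere.
def edge(u, v):
    return 1.0 if v > u else 0.0

for scale in (1, 2, 4):
    image, calls = render_supersampled(edge, 8, 8, scale)
    print(f"{scale}x per axis: {calls} shade calls")
```

The shade-call count grows with the square of the per-axis factor, which is the cost being described; the payoff is that pixels along the edge come out as in-between greys instead of pure black or white.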

I feel like instead of the “settings have been optimized for your hardware” pop up that almost always sets them to something that doesn’t account for the trade-off between looks and framerate that a player wants, there should be a “these settings are designed for future hardware and may not work well today” pop up when a player sets everything to max.

I’ve noticed some games also don’t actually max things out when you select the highest preset.

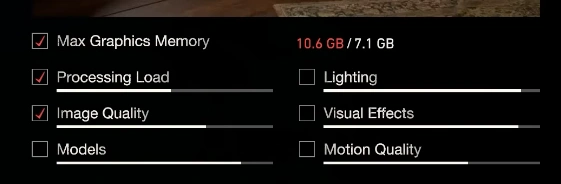

I also really like the settings menu of the RE engine games. It has indicators that aggregate how much “load” you’re putting on your system by turning each setting up or down, which lets you make more informed decisions on what settings to enable or disable. And it in fact will straight up tell you when you turn stuff too high, and warns you that things might not run well if you do.

Even if it can’t tell how much load you put on your system because that is a complex interaction of various bottlenecks, it would at least be nice if they labelled which settings are likely to contribute to the CPU, GPU, RAM, VRAM, … bottlenecks.

Obviously.

There are a total of seven indicators, the only one that is labeled with numbers is the one that estimates how much VRAM will be used.

The rest are just unlabeled bars for “Processing Load” and visual effects categories. They don’t ACTUALLY have anything to do with how much your system is able to do, they just indicate what a setting does in relation to themselves. (checkmarks show which bars the currently selected setting affects)

doesn’t account for the trade-off between looks and framerate that a player wants,

Yeah, I thought about talking about that in my comment too. Like, maybe a good route would be to have something like a target minimum FPS slider or something. That – theoretically, if implemented well – could provide a way to do reasonable settings on a limited per-player basis without a lot of time investment by the player and without smacking into the “player expects maximum settings to work” issue.
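One way a target-FPS slider could drive things is a small feedback loop that nudges a render scale up or down each frame. This is only a sketch under made-up assumptions: `adjust_render_scale`, its step and slack values, and the fake cost model (28 ms at full scale, shrinking with the square of the scale) are all hypothetical.

```python
def adjust_render_scale(scale, frame_ms, target_fps,
                        lo=0.5, hi=1.0, step=0.05, slack=0.10):
    """Nudge the render scale one step toward the frame-time budget.

    Drops the scale when the last frame ran over budget, raises it when there
    is more than `slack` headroom, and otherwise leaves it alone.
    """
    budget_ms = 1000.0 / target_fps
    if frame_ms > budget_ms:
        scale -= step
    elif frame_ms < budget_ms * (1.0 - slack):
        scale += step
    return round(min(hi, max(lo, scale)), 2)

# Made-up cost model standing in for a real renderer.
def fake_frame_ms(scale):
    return 28.0 * scale * scale

scale = 1.0
for _ in range(20):
    scale = adjust_render_scale(scale, fake_frame_ms(scale), target_fps=60.0)
print(scale)
```

The slack band is there so the scale settles instead of flipping back and forth every frame; a real implementation would probably also smooth the frame-time measurement.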

There are also a few people who want the ability to ram quality way up and do not care at all about frame rate for certain things like screenshots, which complicates matters.

I think that one of the big problems is that if any games out there do a “bad” job of choosing settings, which I have seen many games do, it kills player trust in the “auto calibration” feature. So the developers of Game A are impacted by what the developers of Game B do. And there’s no real way that they can solve that problem.

Maybe if games consistently had some kind of really reliable auto-profiling mechanism that could go run various “stress test” scenes with a variety of settings to find reasonable settings for given hardware

…like most games from the early 2010s? A lot of them had a built-in benchmark that tested what your PC was capable of and then set things up for you.

Yeah, there are auto-calibration systems, but that’s why I’m emphasizing “reliably”. I’ve had some of them, for whatever reason, not ramp up quality settings on hardware a decade later even though it can run it smoothly, which is irritating. In fairness to the developers, they can’t test on future hardware, but I also don’t understand why that happens. Maybe there’s some degree of hard-coded assumptions that fall down for some reason down the line.

As much as I find distasteful the idea of shipping “mandatory” patches for single player games years down the line to fix issues that should’ve been caught during QA… this might be a decent use case for them

I don’t like the idea of it needing to be patched in.

At launch, advanced graphics settings could be something that is disabled by default but unlockable (via a config.ini setting, console command, cheat code, whatever). Really the implementation isn’t what’s important, just that it is opt-in and the user knows that they are leaving the normal settings and entering something that may not work as expected.
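As a sketch of the config.ini route, assuming a made-up `[graphics]` section with an `unlock_advanced` key and made-up slider limits (none of this is from any real game):

```python
import configparser

# Hypothetical slider limits; the advanced set is what the opt-in unlocks.
NORMAL_MAX = {"draw_distance": 4, "shadow_quality": 3}
ADVANCED_MAX = {"draw_distance": 8, "shadow_quality": 6}

def allowed_max(config_text):
    """Return the slider limits allowed by the given config.ini contents."""
    parser = configparser.ConfigParser()
    parser.read_string(config_text)
    # Missing section or key means the player never opted in.
    unlocked = parser.getboolean("graphics", "unlock_advanced", fallback=False)
    return ADVANCED_MAX if unlocked else NORMAL_MAX

print(allowed_max(""))
print(allowed_max("[graphics]\nunlock_advanced = true\n"))
```

Defaulting to locked when the key is absent keeps it opt-in, and a later patch could flip the default without touching anyone’s existing config.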

Then if they are still supporting the game later they can change the defaults with a patch but if the devs don’t have that opportunity the community can still document this behaviour on sites like www.pcgamingwiki.com.

That is not at all how it works or what they are saying. For the time, it was not poorly optimised. Even at low settings it was one of the best looking games released, and pushed a lot of modern tech we take for granted today in games.

Being designed to scale does not mean it’s badly optimised.

It was not poorly optimized; what made it demanding was things like draw distance and texture resolution.