It is kind of interesting how open machine learning already is without much explicit advocacy for it.

In all of IT, it’s the only field I can think of where the open version is just a few months behind SOTA.

Open training pipelines and open data are the only aspects that could still use improvements in ML, but there are plenty of projects that are near-SOTA and fully open.

ML is extremely open compared to consumer mobile or desktop apps, where the open alternatives are always ~10 years behind SOTA.

I feel like it’s really far from being open. Besides the training data not being open, the more popular ones like Llama and Stable Diffusion have these weird source-available licenses with anti-competitive clauses, user count limits, or arbitrary morality clauses.

Yeah, there has also been an increase in the number of companies either making FLOSS work more closed off or just not caring about it if that benefits their bottom line.

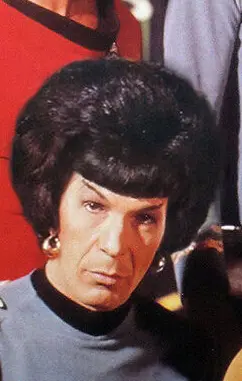

Unrelated, but I like your new profile pic.

Almost all of Qwen 2.5 is Apache 2.0, SOTA for the size, and frankly obsoletes many bigger API models.

Ironically, thanks in no small part to Facebook releasing Llama and kind of salting the earth for similar companies trying to create proprietary equivalents.

Nowadays you either have gigantic LLMs with hundreds of billions of parameters, like Claude and ChatGPT, or you have open models that are sub-200B.

I personally think the really large models are useless. What's very impressive are the small ones that somehow manage to be good. It blows my mind that so much information can fit in 8B.

True that, LLMs could be the future of lossy compression.

Llama 3.1 405B has entered the chat

Waiting for Mixtral to optimise it enough to run on a home computer, and then for Dolphin to uncensor it.

Waiting for an 8x1B MoE