I never understood how they were useful in the first place. But that’s kind of beside the point. I assume this is referencing AI, but due to the fact that you’ve only posted one photo out of apparently four, I don’t really have any idea what you’re posting about.

The point of verification photos is to ensure that nsfw subreddits are only posting with consent. Many posts were just random nudes someone found, in which the subject was not ok with having them posted.

The verification photos show an intention to upload to the sub. A former partner wanting to upload revenge porn would not have access to a verification photo. They often require the paper be crumpled to make it infeasible to photoshop.

If an AI can generate a photorealistic verification picture, it cannot be used to verify anything.

I didn’t realize they originated with verifying nsfw content. I’d only ever seen them in otherwise text-based contexts. It seemed to me the person in the photo didn’t necessarily represent the account owner just because they were holding up a piece of paper showing the username. But if you’re matching the verification against other photos, that makes more sense.

It’s been used way before the nsfw stuff and the advent of AI.

Back in the day, if you were doing an AMA with a celeb, the picture proof was the celeb telling us this was the account they were using. It didn’t need to be their account, and it was only useful for people with an identifiable face. If you were doing an AMA because you were some specialist or professional, giving your face and username didn’t do anything; you needed to provide paperwork to the mods.

This is a poor way to police fake nudes though, I wouldn’t have trusted it even before AI.

It used to be tits or GTFO on /b/.

From now on I’ll have amazing tits.

Was it really that hard to Photoshop enough to bypass mods that are not experts at photo forensics?

Probably not, but it would still reduce the amount considerably.

It’s mostly about filtering the low-hanging fruit, aka the low effort trolls, repost bots, and random idiots posting revenge porn.

As in most things. I don’t have security cameras to capture video of someone breaking in. I have them so my neighbour’s house looks like an easier target.

I think it takes a considerable amount of work to photoshop something written on a sheet of paper that has been crumpled up and flattened back out.

If you have experience with the program it’s piss easy

However most people do not have experience.

You also have to include the actual person holding something that can be substituted for the paper.

Sort of. You just need the vague correct position of the elbow/shoulder and facing the camera. You can get away with photoshopping different arms and most people wouldn’t notice if you do it correctly.

So you need a guy with such experience on your social engineering team.

there’s a lot of tools to verify if something was photoshopped or not… you don’t need to be an expert to use them

I tried some one day and didn’t find any that were actually easy for a noob. I remember having to check resolution, contrast, spatial frequency disruption, etc., and nothing looked easy to detect without proper training.

i wouldn’t just go around telling people that…

Can you share more? Never had to use one.

You can verify the resolution changes across a video or photo. This can be overcome by setting a dpi limit to your lowest resolution item in the picture, but most people go with what looks best instead of a flat level.
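For anyone curious what a check like that might look like, here is a minimal sketch, not what any real forensic tool does. It assumes the image is already loaded as a 2D list of grayscale values, and it uses per-block sharpness (mean absolute Laplacian) as a rough stand-in for local resolution. The function names, the 8-pixel block size, and the 3x threshold are all made up for illustration.

```python
def block_sharpness(gray, block=8):
    """Mean absolute Laplacian per block for a 2D list of grayscale values."""
    h, w = len(gray), len(gray[0])
    scores = {}
    for by in range(0, h - block + 1, block):
        for bx in range(0, w - block + 1, block):
            total, count = 0.0, 0
            # Skip the outermost image border, where a pixel lacks a neighbour.
            for y in range(max(by, 1), min(by + block, h - 1)):
                for x in range(max(bx, 1), min(bx + block, w - 1)):
                    lap = (gray[y - 1][x] + gray[y + 1][x]
                           + gray[y][x - 1] + gray[y][x + 1]
                           - 4 * gray[y][x])
                    total += abs(lap)
                    count += 1
            scores[(by, bx)] = total / count if count else 0.0
    return scores


def flag_outliers(scores, ratio=3.0):
    """Return block positions whose sharpness is far from the median block."""
    values = sorted(scores.values())
    median = values[len(values) // 2]
    if median <= 0:
        return []
    return [pos for pos, s in scores.items()
            if s > median * ratio or s < median / ratio]
```

A pasted-in region that was scaled up or down tends to be noticeably softer or sharper than its surroundings, so it shows up as an outlier block. Matching everything to the lowest-resolution item, as described above, would defeat a check this simple.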

I was going to suggest using an artifact overlay to suggest all the images were shot by the same lens on the same camera

On a side note, they are also used all the time for online selling and trading, as a means to verify that the seller is a real person who is in fact in possession of the object they wish to sell.

How does traditional - as in before AI - photo verification know the image was not manipulated? In this post the paper is super flat, and I’ve seen many others.

From reading the verification rules from /r/gonewild they require the same paper card to be photographed from different angles while being bent slightly.

Photoshopping a card convincingly may be easy. Photoshopping a bent card held at different angles that reads as the same in every image is much more difficult.

That last thing will still be difficult with AI. You can generate one image that looks convincing, but generating multiple images that are consistent? I doubt it.

The paper is real. The person behind it is fake.

Curious how long it’ll be until we start getting AI 3D models of this quality.

I feel like you could do this right now by hand (if you have experience with 3d modelling) once you’ve generated an image. 3d modelling often includes creating a model from references, be they drawn or photographs.

Plus, I just remembered that creating 3d models of everyday objects/people via photos from multiple angles has been a thing for a long time. You can make a setup that uses just your phone and some software to make 3d printable models of real objects. There’s nothing preventing someone from using a series of AI generated images instead of photos they took. So long as you can generate a consistent enough series to get a base model, you can do some touch-up by hand to fix anything that the software might’ve messed up. I remember a famous lady in the 3d printing space who I think used this sort of process to make a complete 3d model of her (naked) body, and then sold copies of it on her Patreon or something.

I found this singular screenshot floating around elsewhere, but yes r/stablediffusion is for AI images.

I had some trouble figuring out what exactly was going on as well, but the Stable Diffusion subreddit gave away that it was at least AI related, as that’s one of the popular AI programs. It wasn’t until I saw the tag though, that I really understood - Workflow Included. Meaning that the person included the steps they used to create the photo in question. Which means that the person in the photo was created using the AI program and is fake.

The implications of this sort of stuff are massive too. How long until people are using AI to generate incriminating evidence to get people arrested on false charges, or the opposite - creating false evidence to get away with murder.

Pretty sure it started because nsfw subreddit mods realized they could demand naked pictures of women that nobody else had access to, and it made their little mod become a big mod.

Verification posts go back further than Reddit.

They were used extensively on 4chan, because they were the only way to prove that a person posting was in fact that person. And yes, it was mostly people posting nudes, but it was more that they wanted credit.

The reason it carried on to Reddit was because people were using the accounts to advertise Patreon and OnlyFans, and mods mostly wanted the people making money off the pictures to be the people who took those pictures.

Also it was useful for AMA posts and other such where a celebrity was involved.

4chan was a bit different in that it was anonymous to begin with- and more to the point, it was self-volunteered verification, not a mod-driven requirement.

As for Reddit, mods were requiring private verification photos LONG before Patreon and OnlyFans even existed in the first place.

AMAs, agreed.

“No no it’s not about consent it’s about someone being horny” is such a bad take… and bad taste.

I hate to break this to you, but there were in fact subreddits that publicly stated that they required you to privately DM mods full-body, full-face nudes in poses of the mod’s choice for verification.

That ain’t me being in bad taste, it’s just me doing basic observation. Some subreddits it was about verification, yes. Some it was about consent. Some of them it was about the mods being horny. And most of them, it was some combination of the three.

To pretend that it didn’t happen is… well, casual erasure of sexual misconduct of the mods, frankly.

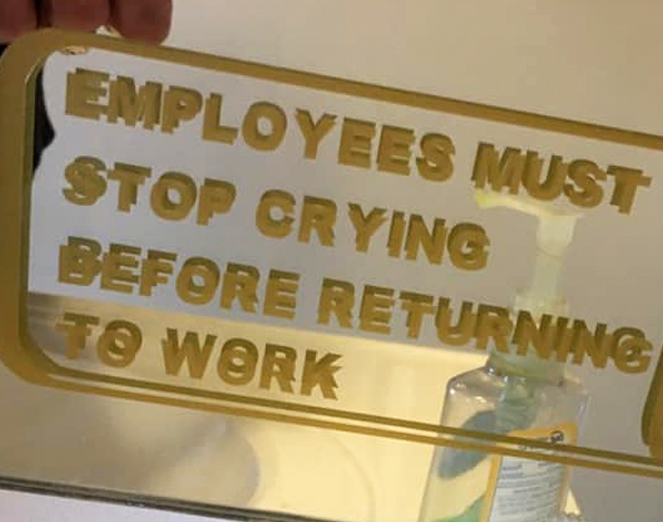

Look at this verification

Ha perfect!

Every night it even makes me legitimize

Is that Chad Kroeger from Nickelback?

Is that Joey with something on his head?

How did his eyes get so red?

At some point the only way to verify someone will be to do what the Klingons did to rule out changelings: Cut them and see if they bleed.

Don’t worry, companies like 23andMe and Ancestry have been banking DNA records, so mimicking blood won’t be too hard, either.

The Thing migrated to Star Trek?

I think that stopped working as of Picard season 3.

Wait really? Haven’t seen Picard, what happened?

Two seasons of stupid garbage followed by Season 3 which just ignored the garbage and made things right.

Well, changelings bleed for example

So they’ve gotten better at mimicking other life forms? Is that the canonical reason in the show or is Picard just going against established lore?

I only watched a little of Picard before I gave up. But if I had to guess, it’s that the writers never watched any Star Trek, so they get a lot wrong.

Yeah but isn’t it just the changelings that Starfleet did all the fucked up experiments on that bleed?

Ye, good thing I already have spychecking as a persistent habit!

Pyro has entered the chat.

I’m pretty sure we can just switch to a verification video chat which will buy us a year.

One year? I’m guessing six months, what a time to be alive!?!

two more papers down the line!

Nope, 1 month ago: https://humanaigc.github.io/animate-anyone/static/videos/demo11.mp4

I think AI can already do videos with people in them. Not with it looking completely natural though, so there will be some discrepancies.

Can confirm, I made some random Korean dude on DALL-E to send to Instagram after it threatened to close my fake account, and it passed.

The eyes are in the exact same spot in every photo

Why would they require a photo? What the heck?

Social media companies must have to charge less if several people seeing ads are actually bots

Hahaha, those jerks!

Ooooh an actually interesting use of ai: preserving anonymity

Y’all just trying to recreate the idea of digital avatars in here

In the dark future, an underground market has formed to preserve the anonymity and privacy of the average person using holographic disguises of anthropomorphic figures that were in the distant past sometimes known as “furries.”

Ah yes, even in the dark future, furries are making super advanced and useful technologies to be more furry.

There are projects that already exist with this sort of purpose, one I came across a while ago was Deep Privacy which uses deepfakes to replace your face and body in an image with one that is AI generated.

This is making me think of A Scanner Darkly. Check out the movie if you haven’t.

Agreed but skip the movie and read the book. 1000x better.

That movie temporarily cured my insomnia.

I’ve had an AI generated mix between my face and an actor’s as my Facebook profile pic for a little over a year now I think, or close to it, and only my sister has called me out on it.

I mean I wouldn’t bring it up to most people with how much some people shop their shit before uploading.

Yeah I wouldn’t either. I still think it’s pretty funny though

I’m in the same boat. I basically want to wear an ai mask. I don’t like cartoon face trackers or similar. I don’t have the hardware to render a video though, and I’m not going to buy server time.

Bro there are people who post entire timelines of themselves in completely anime ai generated imagery

And they are living better than I

People use some Snapchat filters like this, like the anime face one I see a lot.

Google automatic1111, it’s the program to run if you want to generate AI images. You can put in the original photo, use the built in editor and request the face of a pretty man/woman/elephant (for all I care) and it’ll generate a face and merge it with the surrounding image perfectly.

Requires a graphics card with a few gigabytes of vram though, so there is a certain hardware requirement if you want to do this locally.

I really like “Bitmoji” on my iPhone as an interesting start in that direction. I can create my avatar, whether as similar to me or not, and use it as a filter on FaceTime where it follows a lot of my actual movement and expressions

What am I looking at here?

GenAI-made image of a verification post. The point, I guess, is that with genAI photos, anyone can easily make a fake verification post, making them less useful as a means to verify identity.

The post originally is from reddit (https://www.reddit.com/r/StableDiffusion/s/fEle6uaiR7)

Thanks, I thought that’s what it was but wasn’t sure.

Very rapidly the basis of truth in any discussion is going to get eroded.

Micro communities based on pre (post-truth) connections. Only allowing people into the community that can be confirmed by others?

I’ve been thinking of starting a Matrix community to get away from Discord and its inevitable botting.

Once again everyone on the internet is a cute girl if they want to be.

Or a cute cat.

Or Elvis.

It’s the return of 16/f/cali

And then there is me. I’m all of the above.

If this is what we get, fine. She’s hot.

That’s why you need a video with movement. AI still can’t do video right.

It’s getting close, now you can provide a picture of someone and an animated skeleton, and it outputs the person moving according to the reference.

Where do I get an animated skeleton?

Home Depot sells them around October

I mean, you can’t argue with the realism

It’s a seasonal product, you have to wait for October.

Here’s an example by Animate Anyone https://humanaigc.github.io/animate-anyone/static/videos/demo11.mp4

Midjourney will start producing videos in 2024.

https://ymcinema.com/2024/01/04/midjourney-will-start-to-create-ai-videos/

This has been published a month ago https://humanaigc.github.io/animate-anyone/static/videos/demo11.mp4

It can https://youtu.be/8PCn5hLKNu4

It is however still cutting edge research

Until it can

An arms race is inevitable until someone invents a perfect automated Turing test.

Never trust your eyes or ears again in this modern digital hellscape! https://youtube.com/shorts/55hr7Tx_7So?si=db5hROJWYjdQRMTD

In ‘Stranger In A Strange Land’ there’s an interesting profession; Fair Witnesses are sworn to provide a disinterested examination of any situation.

I’ve been thinking how much we need this for eight years now, and since the AI explosion it only seems more dire.

Isn’t there a trick where you can ask someone to do a specific hand gesture to get photos verified? That’ll still work, especially because AI makes fingers look wonky.

AI has been able to do fingers for months now. It’s moving very rapidly so it’s hard to keep up. It doesn’t do them perfectly 100% of the time, but that doesn’t matter since you can just regenerate it until it gets it right.

You could probably just set up a time for the person to send a photo, and then give them a keyword to write on the paper, and they must send it within a very short time. Combine that with a weird gesture and it’s going to be hard to get a convincing AI replica. Add another layer of difficulty and require photos from multiple angles doing the same things.
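A rough sketch of how the timed keyword idea could be tracked on the mod side, under some loud assumptions: the word list, the gesture list, the 5-minute window, and the function names are all hypothetical, and a human still has to read the keyword and gesture off the photo, since this code does not look at images at all.

```python
import secrets
import time

# Hypothetical lists for illustration; a real deployment would use larger ones.
WORDS = ["maple", "quartz", "harbor", "violet", "copper", "lantern"]
GESTURES = ["peace sign", "thumbs up", "three fingers", "hand on head"]


def issue_challenge(username, window_seconds=300, now=None):
    """Create a one-time keyword and gesture that expire after a short window."""
    issued_at = time.time() if now is None else now
    return {
        "username": username,
        "keyword": f"{secrets.choice(WORDS)}-{secrets.randbelow(10000):04d}",
        "gesture": secrets.choice(GESTURES),
        "expires_at": issued_at + window_seconds,
        "used": False,
    }


def accept_submission(challenge, username, keyword_seen, gesture_seen, now=None):
    """Accept only a matching, on-time, first-use submission.

    keyword_seen and gesture_seen are what a moderator reads off the photo.
    """
    current = time.time() if now is None else now
    if challenge["used"] or current > challenge["expires_at"]:
        return False
    ok = (username == challenge["username"]
          and keyword_seen == challenge["keyword"]
          and gesture_seen == challenge["gesture"])
    if ok:
        challenge["used"] = True  # a challenge cannot be replayed
    return ok
```

The short window is the part doing the work here: it does not make a fake impossible, it just limits how many regenerate-and-retouch attempts fit before the keyword expires.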

LoRAs can be supplied to the AI. These are small add-on models trained on specific ideas like certain hand gestures, lighting levels, or whatever style you need. You can fine-tune the general model with LoRAs.

I have the minimum requirements to produce art and HQ output takes 2 minutes. Low-quality only takes seconds. I can fine-tune my art on a LQ level, then use the AI to upscale it back to HQ. This is me being desperate, too, using only local software and my own hardware.

Do this through a service or a gpu farm and you can spit it out much quicker. The services I’ve used are easy to figure out and do great work for free* in a lot of cases.

I think these suggestions will certainly be barriers, and I can think of some more stop-gaps, but they won’t stop everyone from slipping through the cracks, especially as passionate individuals who hyper-focus on technology we only think about in passing continue working on it.

Simpler thing is to just have the user take a video. I’ve already seen that in practice.

With a shoe on their head and a sharpie up their ass.

A sharpie is a poor and dangerous anal simulator. It is too easy to be sucked in.

Never put things into your bum unless they have a flange

I think the real problem with this as anal simulation is it looks and feels nothing like an anus

I feel like there’s a way to get around that… Like if you really wanted, some sort of system to Photoshop the keyword onto the piece of paper. This would allow you to generate the image but also not have to worry about the AI generating that.

Edit: also, does anyone remember that one paper that had to do with a new AI architecture where you could put in some sort of negative image to additionally prompt an AI for a specific shape, output, or position?

Just write on paper and overlay via Photoshop. Photopea has a literal one-button-click function for that. Just blank paper and a picture with enough light. Very easy.

And it’ll get better if loads of verification posts are doing hand signs

“Can you hold up 7 fingers in front of the camera?”

Photo with one hand up

Some AI models have already nailed the fingers, so this won’t do anything. We need something that we can verify without having to trust the other person. I hate to say it, but the blockchain might be one of the best ways to authenticate users to avoid bots.

Blockchains have no inherent capability to perform user authentication.

Blockchains aren’t exactly the best at proof of personhood. Usually all they can do is make masquerading as multiple people (a Sybil Attack) more expensive.

That’s not to say interesting approaches haven’t come out of blockchain-adjacent work, like https://passport.gitcoin.co/.

How would the blockchain help?

I can finally realise my dream of commenting on r/blackpeopletwitter

Yup. This is already a thing

I am totally not 𝖺 𝗋𝗈𝖻𝗈𝗍.

Thank goodness we can now use AI to do something that could already easily be done by taking a picture off someone’s social media.

I’m confused. How would that help? The whole point of a verification post is that the username in the image matches the username posting the image. If you’re just talking about Photoshop, then let’s be clear about that. Otherwise, taking photos off social media is no different than someone just Photoshopping any other verification image, even of themselves.