
Bigger instances will indeed run multiple copies of the various components; it’s pretty standard software in that regard.

Usually the first step is moving the PostgreSQL database to its own dedicated box, then adding more backend boxes, possibly with more caching in front so the backend doesn’t have to do as much work. Once the database is pegged, the next step is usually a write primary and one or more read secondaries. When that isn’t enough, you get into sharding, which spreads the database load across multiple servers. I don’t know much about PostgreSQL, but I have to assume it’s better than MySQL in that regard, and I’ve seen a 1 TB MySQL database in the wild running just fine.
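A minimal Python sketch of the read/write split and sharding steps described above. The `QueryRouter` class, the host names, and the `shard_for` helper are hypothetical illustrations, not Lemmy’s or PostgreSQL’s actual configuration; real deployments usually do this in a connection pooler or the application’s database layer.

```python
import itertools
import zlib


class QueryRouter:
    """Hypothetical router: writes go to the primary, reads rotate across replicas."""

    def __init__(self, primary: str, replicas: list[str]) -> None:
        self.primary = primary
        # Fall back to the primary when no read replicas are configured.
        self._replicas = itertools.cycle(replicas or [primary])

    def route(self, sql: str) -> str:
        # Naive classification: only statements starting with SELECT count as reads.
        # (A real router must also send SELECT ... FOR UPDATE to the primary.)
        if sql.lstrip().upper().startswith("SELECT"):
            return next(self._replicas)
        return self.primary


def shard_for(key: str, num_shards: int) -> int:
    """Pick a shard with a stable hash (crc32), unlike Python's per-process str hash."""
    return zlib.crc32(key.encode("utf-8")) % num_shards


router = QueryRouter("db-primary", ["db-replica-1", "db-replica-2"])
print(router.route("SELECT * FROM post LIMIT 20"))       # db-replica-1
print(router.route("INSERT INTO post_like VALUES (1)"))  # db-primary
print(router.route("select count(*) from comment"))      # db-replica-2
```

One catch with read secondaries is replication lag: a read issued right after a write can land on a replica that hasn’t caught up yet, so “read your own writes” paths often stay on the primary.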

I think lemmy.world in general is hitting some scalability issues that they’re working on. Keep in mind the software is fairly new and is only now being truly tested at large scale; there’s probably a ton of room for optimization. Also, lemmy.world is still on 0.17, and apparently 0.18 changed the protocol a lot in a way that makes it scale much better, so once they complete that upgrade it’ll probably run a lot better already.

The part that worries me about scalability in the long term is the push nature of ActivityPub. My server is already getting several POST requests per second to `/inbox`, which makes me wonder how that’s going to work if big instances have to push content updates to thousands of Lemmy instances where most of the data probably isn’t even seen. I was surprised it was a push system and not a pull system, as pull is much easier to scale and cache at the CDN level, and content can be fetched on demand for people who only check Lemmy once in a while.

I need to start digging into Lemmy’s code and get familiar with the internals; I’m still only a couple of days in with my private instance.
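A rough back-of-envelope comparison of the two models, as a Python sketch. The traffic numbers are made up, and the pull side assumes a hypothetical shared CDN cache in front of each feed; neither function reflects how Lemmy or ActivityPub actually deliver content.

```python
SECONDS_PER_DAY = 86_400


def push_deliveries_per_day(activities_per_day: int, subscribed_instances: int) -> int:
    """Push model: every activity is POSTed to the inbox of every subscribed instance."""
    return activities_per_day * subscribed_instances


def pull_origin_hits_per_day(cache_ttl_seconds: int) -> int:
    """Pull model behind a shared CDN cache: the origin rebuilds a given feed at most
    once per TTL window, however many instances poll it."""
    return SECONDS_PER_DAY // cache_ttl_seconds


# Hypothetical numbers: a busy community producing 10,000 activities per day,
# followed from 1,000 instances, versus one cached feed with a 60-second TTL.
print(push_deliveries_per_day(10_000, 1_000))  # 10000000
print(pull_origin_hits_per_day(60))            # 1440
```

The trade-off is latency: with pull, updates can be up to one TTL stale and instances poll even when nothing has changed, while push delivers each activity immediately.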

The most important one:

The security issue got officially patched! https://join-lemmy.org/news/2023-07-11_-_Lemmy_Release_v0.18.2

The numbers are a little higher than you mention (currently ~3.2k active users). The server isn’t very powerful either; it’s now running on a dedicated server with 6 cores/12 threads and 32 GB of RAM. Other public instances use larger servers, such as lemmy.world, running on an AMD EPYC 7502P 32-core “Rome” CPU with 128 GB of RAM, or sh.itjust.works, running on 24 cores and 64 GB of RAM. Without running one of these larger instances, I can’t tell what the bottleneck is.

The issues I’ve heard about with federation currently involve how ActivityPub is implemented, and possibly the fact that every upvote is federated individually. This means every upvote adds deliveries to the federation queue, and with a ton of users those pile up fast. Multiply that by all the instances an instance is connected to and the request count grows multiplicatively (votes × connected instances). ActivityPub is the same protocol used by other federated servers, including Mastodon, which had growing pains but appears to run large instances smoothly now.
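A deliberately simplified Python model of how per-vote fan-out can back up an outgoing queue. The rates are hypothetical, and it ignores batching, retries, and parallel workers; it only shows that the backlog grows once votes × instances exceeds the sender’s capacity.

```python
def simulate_backlog(votes_per_second: int, subscribed_instances: int,
                     deliveries_per_second: int, seconds: int) -> list[int]:
    """Queue length at the end of each second when every vote fans out to every
    subscribed instance and the sender drains a fixed number of deliveries per second."""
    backlog = 0
    history = []
    for _ in range(seconds):
        backlog += votes_per_second * subscribed_instances  # one delivery per instance
        backlog -= min(backlog, deliveries_per_second)      # fixed sender capacity
        history.append(backlog)
    return history


print(simulate_backlog(5, 200, 800, 3))  # [200, 400, 600]
print(simulate_backlog(5, 100, 800, 3))  # [0, 0, 0]
```

Doubling either the vote rate or the number of connected instances doubles the incoming deliveries; once that passes the send rate, the backlog grows steadily every second.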

Other than that, websockets seem to be a big issue, but that is being resolved in 0.18. It also appears every connected user receives all the information being federated, which causes the spam of posts being prepended to the top of the feed. I wouldn’t be surprised if people are already running bots that scrape or post content as well, which might cause a flood of new content that has to be federated and backs up the queues; this is mostly speculation, though.
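To illustrate the difference between sending every event to every connected client and sending only relevant ones, here is a small Python sketch. The `Connection` class and both broadcast functions are hypothetical and do not describe Lemmy’s actual websocket code.

```python
from dataclasses import dataclass, field


@dataclass
class Connection:
    """A hypothetical connected client and the communities it subscribes to."""
    user: str
    subscribed: set[str] = field(default_factory=set)
    received: list[int] = field(default_factory=list)


def broadcast_all(connections: list[Connection], community: str, post_id: int) -> None:
    # Every client gets every new post, whether or not it follows the community
    # (community is unused here, kept only to mirror broadcast_filtered's signature).
    for conn in connections:
        conn.received.append(post_id)


def broadcast_filtered(connections: list[Connection], community: str, post_id: int) -> None:
    # Only clients subscribed to the post's community are notified.
    for conn in connections:
        if community in conn.subscribed:
            conn.received.append(post_id)


alice = Connection("alice", {"linux"})
bob = Connection("bob", {"cooking"})
broadcast_filtered([alice, bob], "linux", 42)
print(alice.received, bob.received)  # [42] []
```

With `broadcast_all`, Bob’s feed would also receive post 42, which is the kind of unrelated post appearing at the top of the feed described above.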

As it goes with development, you generally focus on features first. Optimization comes once you reach a point where a code freeze makes sense; then you can work on speeding things up without new features breaking stuff. This might be a temporary issue for new users, but this project wasn’t expecting a sudden increase in demand. This is a great way to find inefficiencies and improve performance, though. I have no doubt these will be resolved in a timely manner.

My personal node seems to use minimal resources; it barely registers compared to my other services. Looking at the process manager, postgres and the Lemmy backend/frontend use ~250 MB of RAM.

For now, staying off lemmy.ml and moving communities to other instances is probably best. The use case of large instances anywhere near the scale of reddit wasn’t the goal of the project until reddit users sought alternatives. We can’t expect to show up here and demand it work how we want without a little patience and contributing.

0.18.1 hasn’t been released yet. lemmy.world is also only running a release candidate. As soon as 0.18.1 shows up at the top here, the update will be done that same night.

I know it can be frustrating, but we’re waiting for it every day too.

Lemmy.world and some others have held back on the 0.18.0 backend update because the admin did not want to give up the Captcha integration, which broke in 0.18.0. Version 0.18.1 is expected to be released soon and to fix the Captcha regression.

The new version also reduces its reliance on websockets, which should address a number of other quality-of-life problems on this instance, such as the random post bug (and hopefully the 404s/JSON errors I’ve been getting all afternoon).

Hopefully just a few more days and we’ll be back to rights.

I tried to reproduce the exploit on my own instance and it appears that the official Docker for 0.18.1 is not vulnerable to it.

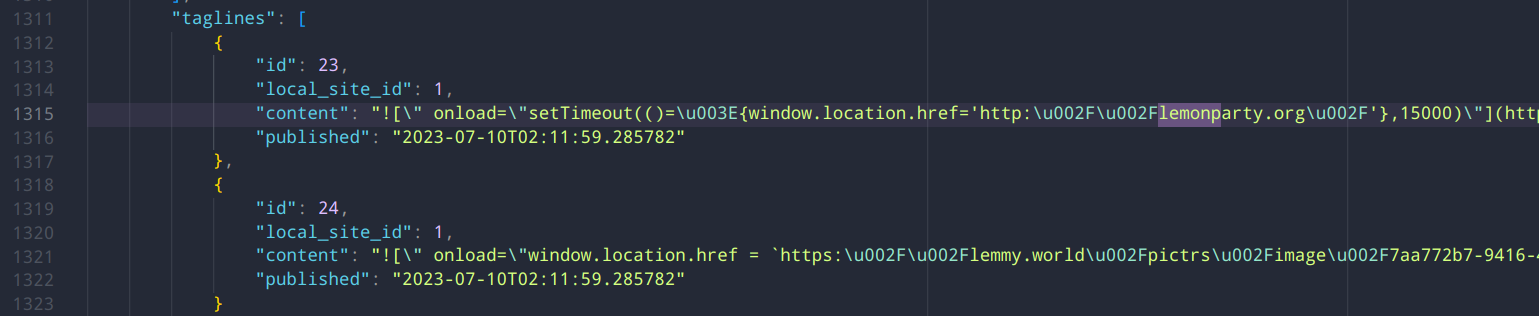

It appears that the malicious code was injected as an `onload` property in the markdown for taglines. I tried to reproduce it in taglines, the instance info, and a post, with no luck: it always gets properly escaped as an HTML entity in the `<img alt="exploit here">` property. lemmy.world appears to be running a git commit that is not public.
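Why proper escaping defeats this kind of attribute injection can be shown with Python’s standard `html.escape`. This is a generic illustration, not Lemmy’s own rendering code, and the `render_img` helper and payload string are hypothetical.

```python
import html


def render_img(src: str, alt_text: str) -> str:
    """Build an <img> tag with attribute values escaped as HTML entities."""
    # quote=True (the default) also escapes " and ', so a value cannot close the
    # attribute early and smuggle in an extra attribute such as onload.
    return f'<img src="{html.escape(src)}" alt="{html.escape(alt_text)}">'


payload = 'exploit here" onload="alert(1)'
print(render_img("emoji.png", payload))
# <img src="emoji.png" alt="exploit here&quot; onload=&quot;alert(1)">
```

Because the quotes become `&quot;`, the whole payload stays inside the `alt` value and the browser never sees an `onload` attribute.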

Query speed is Lemmy’s main performance bottleneck, so we really appreciate any help database experts can provide.

I have been pleading with Lemmy server operators to install the pg_stat_statements extension and share metrics from PostgreSQL: https://lemmy.ml/post/1361757. A restart of the PostgreSQL server is required to install the extension, so I suggest making it part of the 0.18 upgrade. Thank you.
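For reference, enabling the extension generally means adding `pg_stat_statements` to `shared_preload_libraries` in `postgresql.conf` (which is why the restart is needed) and then running `CREATE EXTENSION pg_stat_statements;` in the database. The most useful metrics to share are usually total and mean execution time per normalized query. Below is a minimal Python sketch of that ranking over rows that have already been fetched; the field names mirror the view’s `query`, `calls`, and `total_exec_time` columns (PostgreSQL 13+, where older versions use `total_time`), and the sample rows are made up.

```python
from typing import NamedTuple


class StatementStats(NamedTuple):
    """One row of pg_stat_statements (subset of columns)."""
    query: str
    calls: int
    total_exec_time: float  # milliseconds, summed over all calls


def top_by_total_time(rows: list[StatementStats], n: int = 5) -> list[StatementStats]:
    # The queries consuming the most database time overall: the usual first target.
    return sorted(rows, key=lambda r: r.total_exec_time, reverse=True)[:n]


def mean_ms(row: StatementStats) -> float:
    # Average latency per call; guard against rows with no recorded calls.
    return row.total_exec_time / row.calls if row.calls else 0.0


# Made-up sample rows for illustration only.
rows = [
    StatementStats("SELECT ... FROM post ...", 12_000, 96_000.0),
    StatementStats("UPDATE ... SET ...", 40, 120_000.0),
    StatementStats("SELECT ... FROM comment ...", 50_000, 25_000.0),
]
for row in top_by_total_time(rows, n=2):
    print(f"{mean_ms(row):10.1f} ms/call  {row.query}")
```

Ranking by total time surfaces both rare-but-slow queries and cheap-but-constant ones, which is why it is a common starting point.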

Hey all,

As others mentioned, we did not have custom emojis, so we were not affected by this particular attack. I have since upgraded our UI to 0.18.2-rc.1, which mitigates this XSS vulnerability.

The version number is 0.18.4; that should give you a hint.

It’s entirely possible that these simply haven’t been optimized yet.

Speaking as someone on an instance (lemmy.today) that ran into a lot of breakage from the 0.19.x releases, which still isn’t fully resolved, and whose admin said he wished he could downgrade to 0.18.x but couldn’t because of schema changes, I strongly endorse a conservative approach. The releases have not really met the bar for stability that one might want.

That’s especially true for lemmy.world, since it hosts a large chunk of the Fediverse’s communities; if it has serious problems, there will be spillover effects even for users elsewhere. I’d wait until less critical instances have been the guinea pigs on a release for a while.

I’m surprised they didn’t mention a key tipping point I’ve been following: melting permafrost.

It’s dangerous because once the permafrost in Alaska, Canada, and Russia starts melting, the gases it releases become self-reinforcing: the warming caused by permafrost melt is enough to keep the permafrost melting.

Good report on the dangers here:

https://news.un.org/en/story/2022/01/1110722

Current estimates are that an increase of just 1.5°C in global temperatures over average would be enough to hit this tipping point and we’re already at +0.8.

https://worldbusiness.org/permafrost-the-climates-tipping-time-bomb/

Continuing to increase at 0.08°C per decade would mean hitting it in roughly 80 to 90 years. Except that since 1980, we’ve been warming at +0.18°C per decade, so we should hit the tipping point by 2062, tops.
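The arithmetic above is a straight-line extrapolation, sketched here in Python with the comment’s own figures (+0.8°C now, a 1.5°C threshold, and 0.08 or 0.18°C per decade). Counting from 2023 is an assumption based on when this thread was written; the sketch ignores any acceleration or feedback.

```python
def years_to_threshold(current_c: float, threshold_c: float, rate_c_per_decade: float) -> float:
    """Years until a linear warming trend crosses the threshold."""
    return (threshold_c - current_c) / rate_c_per_decade * 10


slow = years_to_threshold(0.8, 1.5, 0.08)  # long-term rate
fast = years_to_threshold(0.8, 1.5, 0.18)  # post-1980 rate
print(round(slow, 1), round(fast, 1))      # 87.5 38.9
print(2023 + round(fast))                  # 2062, assuming we count from 2023
```

The remaining 0.7°C divided by 0.08°C per decade gives about 8.75 decades, and divided by 0.18°C per decade about 3.9 decades.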

Eh, what do I care, I’ll be 93 years old, assuming I’m not already dead by then. :)

https://www.climate.gov/news-features/understanding-climate/climate-change-global-temperature

The Hot algorithm was actually broken, but Lemmy 0.18.0 came out today and instances are starting to update. Once your instance updates, install Jerboa 0.35 and you’ll notice the difference.

Lemmy.world was already running a version with this security patch, but indeed that’s also in 0.18.2!

Good work upgrading! I can’t imagine it being too easy with a big instance.

I had issues with comments not federating to my own instance before this update (showing 0 for hours). Opening this up now showed most, if not all, of them right away. Hopefully that means 0.18.1 fixed a fair few of the federation issues people had.

I moved to KBin for a time when Lemmy had various issues such as auto-updating timelines that were hard to deal with and hugely broken algorithms for “Hot” posts, etc.

Somewhere around release 0.18.3 a lot of these issues were fixed and I ditched KBin. I figured that in the long term, Lemmy was likely to get more development attention. It also used more straightforward terms like “communities” instead of what KBin calls them (“magazines”), which just seemed like unnecessary and confusing terminology for the sake of being different rather than because it made sense.

The KBin interface looks polished, but it hides a lot of fundamental issues with the software under the hood. I hope the project receives more dev attention and thrives in the long-term, however. It’s good for the Fediverse that choices exist.

I kinda miss Reddit, but browsing it today felt kinda weird. Lemmy is starting to feel more and more like home as more people join in and participate. It also really helps that the 0.18 update fixed so many of the issues.

Hot is broken atm. There’s an edge case that stops the hot_rank value from decreasing, meaning the post never leaves the first page.

Gonna be fixed in 0.18, but yeah, use New until then.
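For context, a “hot” rank is normally a time-decayed score that has to keep going down as a post ages. Below is a Python sketch with the shape of the SQL formula earlier Lemmy releases used (log-damped score over a power of age in hours). The constants are an assumption here, not verified against 0.18, and the comment above doesn’t say what the actual edge case was.

```python
import math


def hot_rank(score: int, hours_since_published: float) -> int:
    """Time-decayed rank: log-damped score divided by a power of the post's age.

    Constants (10000, +3, +2, 1.8) are assumed, modeled on older Lemmy SQL.
    """
    return math.floor(
        10_000 * math.log10(max(1, score + 3)) / (hours_since_published + 2) ** 1.8
    )


# Recomputing the rank as the post ages makes it fall off the front page.
for hours in (0, 24, 168):
    print(hours, hot_rank(50, hours))
```

If the rank is stored in a column and periodically refreshed, any post that the refresh skips keeps its day-zero value, which is one hypothetical way for a post to stay stuck on the first page.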

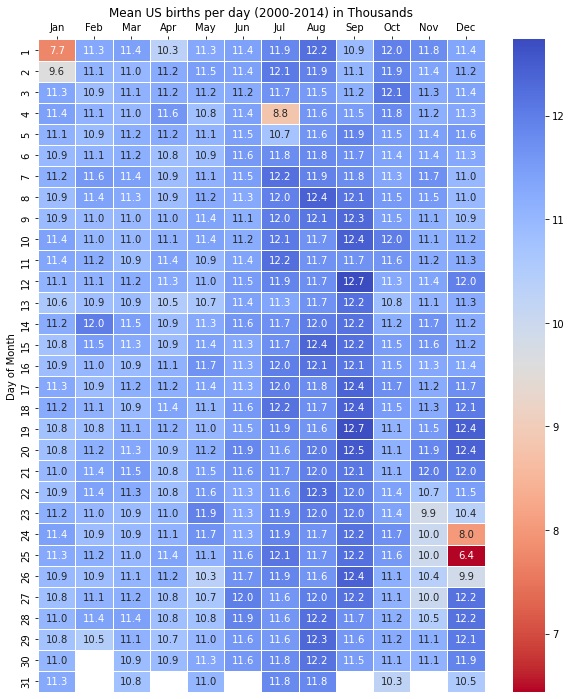

The color scale is terrible. Here is a more credible chart, presumably based on the same data, from the Social Security Administration, covering 62,187,024 US births (2000-2014).

Meanwhile, the post’s chart’s actual Reddit OOP is u/plotset, an account made to shill PlotSet.com, a data visualization software.

They had this to say about the data:

This data represents 4,153,303 US-born babies only between 2000 and 2014.

Top 10 Most Common: Sep 12 (0.307%), Sep 19 (0.306%), Sep 20 (0.302%), Dec 19 (0.300%), Sep 10 (0.300%), Dec 20 (0.299%), Sep 18 (0.299%), Aug 8 (0.299%), Sep 26 (0.299%), Sep 17 (0.298%)

Top 10 Least Common: Dec 25 (0.155%), Jan 1 (0.186%), Dec 24 (0.193%), Jul 4 (0.212%), Jan 2 (0.231%), Dec 26 (0.238%), Nov 23 (0.238%), Nov 25 (0.240%), Nov 27 (0.241%), Nov 24 (0.241%)

Data Source: Kaggle.com/datasets/ayessa/birthday

Tools: PlotSet.com

Note that the “4,153,303” figure is bullshit. It is close to the number of births per year but does not actually match the total for any of the 15 years, nor the average.

Also, neither chart normalizes by weekday: three of the years in question started on a Tuesday and three on a Saturday, while only one started on a Friday, which causes most of the variation that got amplified by OOP’s terrible color range. (Because of leap years, I made a table of the most common starting weekdays for each month; see my other comment. For example, one of the most common birthdays, August 15, fell on a Wednesday or Friday more often than on a Saturday.) Without doing weird math, one can largely mitigate the weekday effect by using data from 28 consecutive years, which I believe can be pieced together from several good online sources, but I’ll leave that as an exercise for the reader.
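The weekday claims above are easy to check with Python’s standard library. This sketch counts which weekday a given date (other than Feb 29) fell on across a range of years. The 28-year check relies on the Gregorian calendar repeating every 28 years within 1901-2099, where every fourth year is a leap year.

```python
from collections import Counter
from datetime import date

WEEKDAYS = ("Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun")  # date.weekday(): Monday == 0


def weekday_counts(month: int, day: int, first_year: int, last_year: int) -> Counter:
    """How often a calendar date (not Feb 29) fell on each weekday, inclusive range."""
    return Counter(
        WEEKDAYS[date(year, month, day).weekday()]
        for year in range(first_year, last_year + 1)
    )


print(weekday_counts(1, 1, 2000, 2014))   # New Year's Day, 2000-2014
print(weekday_counts(8, 15, 2000, 2014))  # August 15, 2000-2014
print(weekday_counts(8, 15, 1987, 2014))  # 28 consecutive years: 4 of each weekday
```

Over 2000-2014, January 1 falls on a Tuesday or Saturday three times each and on a Friday once, and August 15 falls on a Wednesday or Friday three times each and on a Saturday once. Over any 28 consecutive years in that range, each weekday appears exactly four times.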

I’m gonna be asking hard questions, I think, sorry about that. I hope you consider it tough love considering our past interactions.

As an instance admin, I have some questions:

How are you doing? I know there was a lot of pressure when things blew up and it seems to be calming down a bit now.

How is Lemmy doing financially?

Considering past releases and their associated breaking bugs (including 0.18.3), what measures are you taking to help prevent that?

Can we consider the possibility of downgrades being supported?

Why are bugs affecting moderation not release blockers? Does anything block releases?

Are there plans to give instance administrators a voice in shaping the future of Lemmy’s development?

As someone who is trying to help with Lemmy’s development, I have some other questions: