I run an old desktop mainboard as my homelab server. It runs Ubuntu smoothly at loads between 0.2 and 3 (whatever unit that is).

Problem:

Occasionally, the CPU load skyrockets above 400 (yes really), making the machine totally unresponsive. The only solution is the reset button.

Solution:

- I haven’t found what the cause might be, but I think that a reboot every few days would prevent it from ever happening. That could be done easily with a crontab line.

- alternatively, I would like to have some dead-simple script running in the background that simply looks at the CPU load and executes a reboot when the load climbs over a given threshold.

–> How could such a cpu-load-triggered reboot be implemented?

edit: I asked ChatGPT to help me create a script that is started by crontab every X minutes. The script has a kill threshold that does a kill -9 on the top process, and a higher reboot threshold that … reboots the machine. Before doing either, or neither, it writes a log line. I hope this will keep my system running, and I will review the log file to see how it fares. Or it might inexplicably break my system. Fun!

Just as a side note, the load factor can also mean that processes are limited by IO:

Unix systems traditionally just counted processes waiting for the CPU, but Linux also counts processes waiting for other resources – for example, processes waiting to read from or write to the disk.

I would assume that wouldn’t cause so much contention that the system is unusable, though, right? Unless they’re busy waiting.

Just so you know, the load avg is not actually the CPU load. On Linux it is the average number of processes that are either runnable (running or waiting for a CPU) or stuck in an uninterruptible wait, which usually means disk I/O. A good rule of thumb is to keep your load avg below the number of cores your CPU has. If your load avg equals your number of cores, your machine is using roughly 100% of its capacity to handle whatever you’re throwing at it; if it’s twice the number of cores, your machine is overloaded by 100%.
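To make that rule of thumb concrete, here is a minimal Python sketch that divides the load average by the core count. The function name `load_per_core` is made up for illustration; `os.getloadavg()` and `os.cpu_count()` are standard library calls.

```python
import os


def load_per_core(load_avg: float, cores: int) -> float:
    """Return the load average divided by the number of CPU cores."""
    if cores < 1:
        raise ValueError("cores must be at least 1")
    return load_avg / cores


if __name__ == "__main__":
    # os.getloadavg() returns the 1, 5 and 15 minute load averages.
    one_min, five_min, fifteen_min = os.getloadavg()
    cores = os.cpu_count() or 1  # cpu_count() can return None
    print(f"5 min load per core: {load_per_core(five_min, cores):.2f}")
```

A result around 1.0 means the machine is roughly at capacity by this rule of thumb. A load of 400 on a 4 core box would come out at 100.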

To answer your question, you can probably run a script that fetches your 5 min load avg and triggers a reboot if it’s higher than a certain value. You can run it on a regular basis with a systemd timer or a cron job.
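A rough sketch of such a script in Python, using the two-threshold idea from the OP's edit (kill first, reboot at a higher level). The threshold values and the `decide` function are assumptions for illustration, and the script only prints its decision; it does not kill or reboot anything.

```python
import os

# Hypothetical thresholds; tune them to your core count and normal load.
KILL_THRESHOLD = 20.0
REBOOT_THRESHOLD = 50.0


def decide(load: float,
           kill_at: float = KILL_THRESHOLD,
           reboot_at: float = REBOOT_THRESHOLD) -> str:
    """Map a load average to one of 'none', 'kill' or 'reboot'."""
    if load >= reboot_at:
        return "reboot"
    if load >= kill_at:
        return "kill"
    return "none"


if __name__ == "__main__":
    five_min = os.getloadavg()[1]  # index 1 is the 5 minute average
    action = decide(five_min)
    # Dry run: log the decision only. A real version would act on it here,
    # for example by running `systemctl reboot` as root.
    print(f"load5={five_min:.2f} action={action}")
```

Keeping the decision in a pure function means you can test the thresholds without ever touching the machine. As others point out in this thread, a box at load 400 may never get around to running the script at all.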

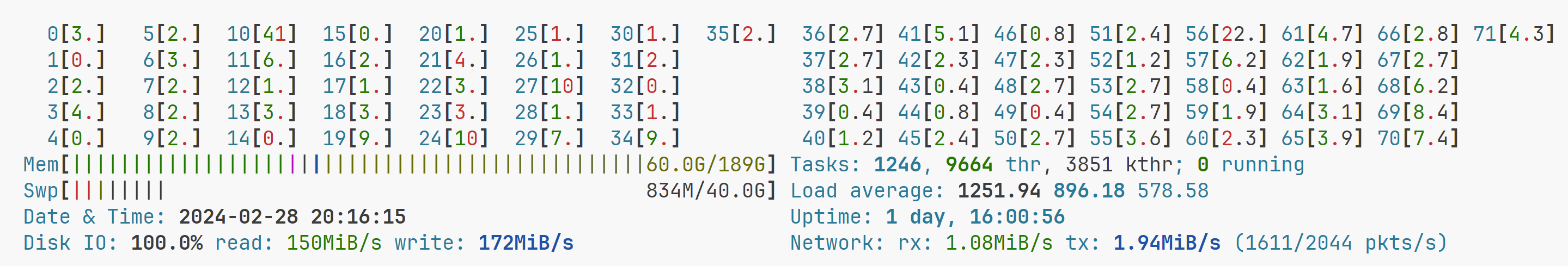

Disk IO can cause ridiculous load averages. The highest one I have seen:

My HDDs were sweating that day. Turns out running btrfs defrag once in a blue moon is a good idea…

Here’s a better suggestion. Why don’t you see if you can find out what’s causing the issue? It sounds like a problem occurring in userspace. Try running htop.

You know you are right, and I’ve tried. I can manually monitor but it doesn’t happen just then. I don’t know yet what causes it, I can only assume it’s one of the Docker containers because the machine is doing nothing else.

I am doing this to find out how often it happens, how quickly it happens, and what’s at the top when it happens.

I can manually monitor but it doesn’t happen just then

Set up proper monitoring with history. That way you don’t have to babysit the server; you can just look at the charts after a crash. I usually go with netdata.

Maybe try capping the resource usage of each container. At least then the machine won’t completely lock up

That’s a good idea, didn’t know Docker had such capability. I will read up on that - could you give me some keywords to start on?

I very recently spun up a vps and wanted to limit resources; I use docker-compose so this was the info I needed https://docs.docker.com/compose/compose-file/compose-file-v3/#resources
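If you would rather cap a container that is already running instead of editing the compose file, `docker update` accepts `--cpus`, `--memory` and `--memory-swap`. This is a different route from the compose `resources` section linked above. The sketch below only builds the command line; the container name and limits are made-up examples, and setting `--memory-swap` equal to `--memory` (no swap for the container) is my assumption, not something from the thread.

```python
import shlex


def docker_update_cmd(container: str, cpus: float, memory: str) -> list:
    """Build a `docker update` argv that caps CPU and memory for a container."""
    return [
        "docker", "update",
        "--cpus", str(cpus),       # e.g. 1.5 means one and a half cores
        "--memory", memory,        # e.g. "512m"
        "--memory-swap", memory,   # assumption: same as --memory, so no swap
        container,
    ]


if __name__ == "__main__":
    # "my-container" is a hypothetical name; print the command, do not run it.
    print(shlex.join(docker_update_cmd("my-container", 1.5, "512m")))
```

To actually apply it, pass the list to `subprocess.run(..., check=True)` as a user that is allowed to talk to Docker.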

Crontab to just auto reboot daily is probably better - if your PC becomes unresponsive I doubt it would be able to execute another script on top of everything. Ideally though, you’d do some log diving and figure out the cause.

This issue doesn’t happen very often, maybe every few weeks. That’s why I think a nightly reboot is overkill, and weekly might be missing the mark? But you are right in any case: regardless of what the cron says, the machine might never get around to executing it.

Load average of 400???

You could install sysstat (or similar) and use output from sar to watch for thresholds and reboot if exceeded.

The upside of doing this is you may also be able to narrow down what is going on, exactly, when this happens, since sar records stats for CPU, memory, disk etc. So you can go back after the fact and you might be able to see if it is just a CPU thing or more than that. (Unless the problem happens instantly rather than gradually increasing).

PS: rather than using cron, you could run a script as a daemon that runs sar at 1 sec intervals.

Another thought is some kind of external watchdog. Curl webpage on server, if delay too long power cycle with smart home outlet? Idk. Just throwing crazy ideas out there.
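A minimal sketch of that external watchdog idea, meant to run on a second machine. The URL, timeout and failure limit are hypothetical, and the power cycle itself is left out because it depends entirely on your smart outlet.

```python
import urllib.request


def is_responsive(url: str, timeout_s: float = 10.0) -> bool:
    """Return True if the URL answers with a 2xx/3xx status within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout_s) as resp:
            return 200 <= resp.status < 400
    except Exception:
        # Timeouts, refused connections, HTTP errors and malformed URLs
        # all count as "not responsive" for a health probe.
        return False


def next_failure_count(previous: int, responsive: bool) -> int:
    """Reset the counter on success, otherwise count one more failure."""
    return 0 if responsive else previous + 1


def should_power_cycle(failures: int, max_failures: int = 3) -> bool:
    """Only power cycle after several consecutive failed probes."""
    return failures >= max_failures
```

Requiring a few failures in a row avoids cutting power over a single slow response. You would call `is_responsive` in a loop with a sleep in between and trigger the outlet once `should_power_cycle` returns True.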

Thank you for these ideas, I will read up on sysstat+sar and give it a go.

Also smart to have the script always running, sleeping, rather than launching it at intervals.
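Something like this could serve as the always-running logger: it sleeps, wakes up, and appends the load plus the top CPU process to a file. It is a sketch, not a replacement for sar. The log path and interval are placeholders, and it assumes the procps `ps` found on Ubuntu. Note that `ps` reports CPU use averaged over the process lifetime, so it is only a rough hint.

```python
import os
import subprocess
import time


def top_process(ps_output: str) -> str:
    """Return the first data row of `ps` output (top CPU consumer), or ''."""
    lines = ps_output.strip().splitlines()
    return " ".join(lines[1].split()) if len(lines) > 1 else ""


def log_line(timestamp: str, loads: tuple, top: str) -> str:
    """Format one log entry from a timestamp, the three load averages and top row."""
    one, five, fifteen = loads
    return f"{timestamp} load={one:.2f},{five:.2f},{fifteen:.2f} top=[{top}]"


def sample() -> str:
    """Take one measurement and return it as a log line."""
    ps = subprocess.run(
        ["ps", "-eo", "pid,pcpu,comm", "--sort=-pcpu"],
        capture_output=True, text=True, check=False,
    ).stdout
    stamp = time.strftime("%Y-%m-%dT%H:%M:%S")
    return log_line(stamp, os.getloadavg(), top_process(ps))


def run(interval_s: float = 10.0, path: str = "load.log") -> None:
    """Loop forever: append a sample to the log file, then sleep."""
    while True:
        with open(path, "a") as fh:
            fh.write(sample() + "\n")
        time.sleep(interval_s)
```

Call `run()` from a systemd service or a terminal multiplexer. Opening the file on each iteration means the last line is already on its way to disk when the machine locks up.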

I know all of this is a poor hack, and I must address the cause - but so far I have no clues what’s causing it. I’m running a bunch of Docker containers so it is very likely one of them painting itself into a corner, but after a reboot there’s nothing to see, so I am now starting with logging the top process. Your ideas might work better.

Have you tried turning your swap off?

Nope, haven’t. It says I have 2 GB of swap on a 16 GB RAM system, and that seems reasonable.

Why would you recommend turning swap off?

To check if your problem is caused by excessive memory usage requiring constant swapping. If it is, turning swap off will make some process be killed instead of slowing the computer down.

The symptoms you describe are exactly what happens to my machine when it runs out of memory and then starts swapping really hard. This is easy to check by seeing if disk io also spikes when it happens, and if memory usage is high
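One way to check this after the fact is to log memory and swap numbers from `/proc/meminfo` alongside the load. A small parsing sketch follows; the function names are made up, while the field names (`MemTotal`, `MemAvailable`, `SwapTotal`, `SwapFree`) are the ones Linux reports.

```python
def parse_meminfo(text: str) -> dict:
    """Map /proc/meminfo field names to integers (kB for sized fields)."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        parts = rest.split()
        if sep and parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def memory_pressure(info: dict) -> dict:
    """Summarise available memory and swap usage as percentages."""
    swap_total = info.get("SwapTotal", 0)
    swap_used = swap_total - info.get("SwapFree", 0)
    return {
        "mem_available_pct": 100.0 * info["MemAvailable"] / info["MemTotal"],
        "swap_used_pct": 100.0 * swap_used / swap_total if swap_total else 0.0,
    }
```

Feed it the file contents, for example `memory_pressure(parse_meminfo(open("/proc/meminfo").read()))`. Very little available memory together with mostly used swap right before a lockup would point toward the swapping theory.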

The answer is to create a short script that periodically queries the load, makes a decision and then triggers a reboot. Run it as a systemd service and give it privileges to do the reboot. Useful languages for the script would be bash or python.

It’s a silly way to handle it. You’re probably quicker and better off solving the actual issue. Because it’s not normal having this happen. Have a look at the logs, or install a monitoring software like netdata to get to the root of this. It’s probably some software you installed that is looping, or having a memory leak and then swapping and hogging the IO until OOM kicks in. All of that will show up in the logs. And you’ll see the memory graphs slowly rising in netdata if it’s a leak.

journalctl -b -1 shows you messages from the previous boot. (To debug after you’ve pressed reset.) You can use a pastebin service to ask for more help if you can’t make sense of the output.

Other solutions: Some server boards have dedicated hardware, a watchdog, to detect this kind of hang.

You can solder a microcontroller (an ESP32 with wifi) to the reset button and program that to be a watchdog.

Edit: But in my experience, it’s usually a similar amount of effort either to dig in and solve the underlying problem once and for all, or to write scripts around it and put a band-aid on it. With the band-aid, though, the issue is still there, and you’re bound to spend additional time on it once side effects and quirks become obvious.

If your board supports it, use a watchdog.

You could disable most of the services running, reintroduce one, and see how it performs. Once satisfied, reintroduce another, and so on until you’ve figured out what is at issue.

Yes, but given the fact that there can be weeks between incidents, that is going to be a long time to be without my services.

Could you use an alternative machine as a temporary machine until you get it resolved?

And do you actually need all of them running 24/7 or are at least some of them nice to haves?

Run SMART short tests on your drives. Any “pending sectors” at all count as a failure.

If the test has any problems, especially pending sectors, replace the drive.
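If you want to check this from a script, the pending sector count shows up as attribute 197 (`Current_Pending_Sector`) in the output of `smartctl -A` on most SATA drives. A small parsing sketch, assuming the usual ten column attribute table; NVMe drives report health differently and will simply yield `None` here.

```python
def pending_sectors(smartctl_attributes: str):
    """Return the raw Current_Pending_Sector value from `smartctl -A` output.

    Returns None if the attribute is missing or its raw value is not a
    plain integer.
    """
    for line in smartctl_attributes.splitlines():
        fields = line.split()
        # Columns: ID NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW
        if len(fields) >= 10 and fields[1] == "Current_Pending_Sector":
            raw = fields[9]
            return int(raw) if raw.isdigit() else None
    return None
```

You would pass it the text captured from `smartctl -A /dev/sdX` (run as root); anything above zero is the signal to replace the drive.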