- cross-posted to:

- technology@lemmy.zip

- technology@lemmy.world

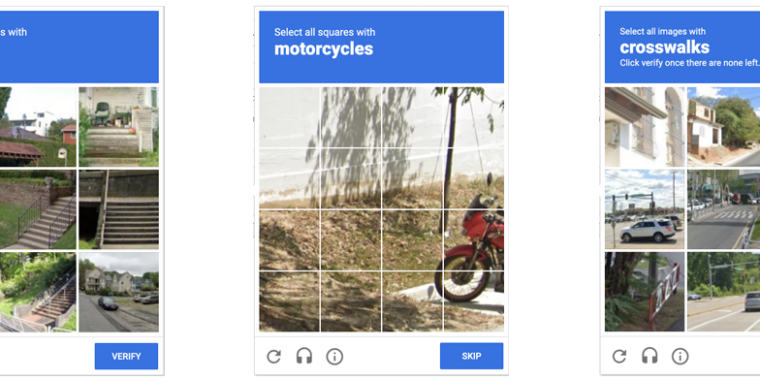

Anyone who has been surfing the web for a while is probably used to clicking through a CAPTCHA grid of street images, identifying everyday objects to prove that they’re a human and not an automated bot. Now, though, new research claims that locally run bots using specially trained image-recognition models can match human-level performance in this style of CAPTCHA, achieving a 100 percent success rate despite being decidedly not human.

ETH Zurich PhD student Andreas Plesner and his colleagues’ new research, available as a pre-print paper, focuses on Google’s reCAPTCHA v2, which challenges users to identify which street images in a grid contain items like bicycles, crosswalks, mountains, stairs, or traffic lights. Google began phasing that system out years ago in favor of an “invisible” reCAPTCHA v3 that analyzes user interactions rather than offering an explicit challenge.

Despite this, the older reCAPTCHA v2 is still used by millions of websites. And even sites that use the updated reCAPTCHA v3 will sometimes use reCAPTCHA v2 as a fallback when the updated system gives a user a low “human” confidence rating.

If you’re using a personal API from Google, is that a way that Google can track you? Part of the reason I use a VPN, NoScript, and an adblocker is to prevent that kind of tracking.

Nothing is truly free with Google, so yeah, most likely they are tracking. If you don't want to use Google, there are other options on their wiki:

https://github.com/dessant/buster/wiki

If not, you can use a dummy account just for this.