The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

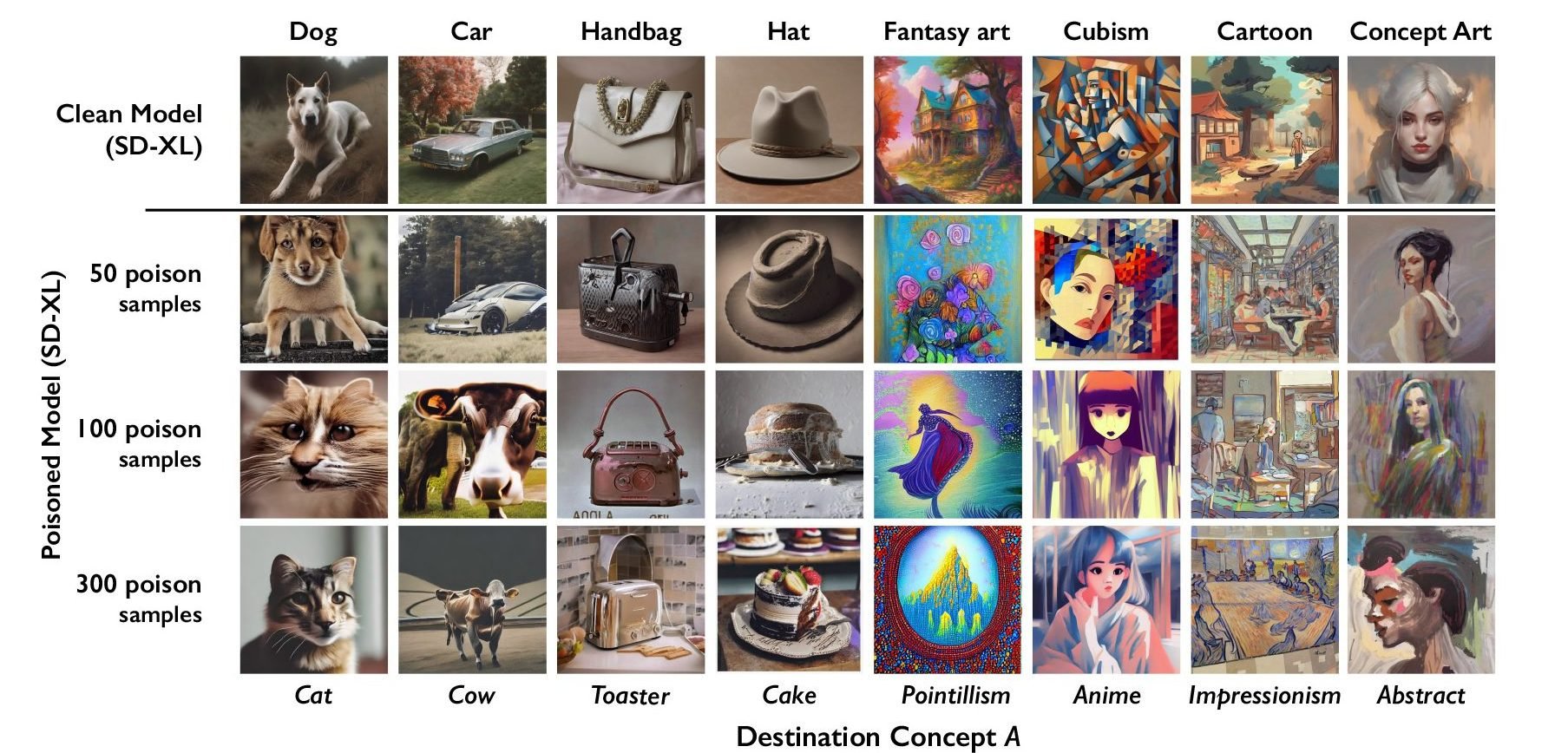

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

The Luddites weren’t inherently anti-technology. They specifically left alone any technology that wasn’t being used by elite capitalists to exploit them and diminish the value of their labor.

You obviously haven’t taken the time to study the history of the Luddites and therefore fail to see why backlashes against exploitative uses of technologies are needed.

I have made art in oil, gouache, watercolor, charcoal, and other physical mediums, as well as in Photoshop, Illustrator, and 4-color screen prints. I’ve made classical, rococo, formalist, and abstract art, and even anime.

I’ve coded in JavaScript, Python, Bash, and C, and I continue to use plenty of tech and learn more about it every fucking day. And yeah, I’ve used AI to help make shitty images and occasionally code simple scripts.

I won’t go into the whole moral problem of OpenAI exploiting Kenyan workers by traumatizing them with horrific content to train its LLM.

Honestly, the piece of shit NFT apes were a better example of art than what AI is currently making, and the hype around AI right now is so similar it makes me laugh.

The worst artists, and the worst coders, I’ve met claim there are no new ideas in either domain, just different mediums/languages to express them in. The problem with AI-generated code and art is that it is GUARANTEED not to make anything new.

There’s a supreme cynicism in the way elite techno-evangelist corporate assholes have basically taken all the data of the past 30+ years, web-scraped from all over the public internet, and said that’s enough to mimic the skills, talents, and knowledge of all of humanity. Oh, and apparently it’s better than human work because we can just pay the human once, pay no royalties, scan their art, their faces, their texts, their voices, and just say fuck ’em, cuz why the fuck would we care about continuing to support the amount of work that went into developing those talents when I can just reap the end results?

AI can’t exist in a vacuum; it needs more data to stay relevant. If enough people starve it, corporations will have no choice but to meet the workers on their terms or simply close up shop, take their millions, and hope people don’t stumble on their version of Galt’s Gulch, cuz if they do, it’ll be mighty fine eating for the poor.

But hey yeah, let’s just blindly follow the Elon Musks, Jeff Bezos, Bill Gates, Mark Zuckerbergs, Tim Cooks, and Sundar Pichais of the world and never question their business practices, regulate their monopolies, or ask whether AI or VR or AR or whatever reality they insist is the “inevitable” future is only a lie that becomes truth because they had the power, influence, and money to make it so.

Personally I’d rather see the majority of people weigh in on what THEY want tech to do for them, and not have tech evangelists and corporate bootlicker lackeys insist on some ambiguous inevitable tech dystopia being unavoidable. Fuck that.

Cuz if there’s one thing that all these pieces of shit at the top of their tech empires have made abundantly clear to the public, it’s that Tech Won’t Save Us.

That’s a wall of text, but I will address your Elon Musk, Gates, etc. comment. The main ones pushing for regulations are specifically these groups.

If it becomes law that you can’t use scraped material for AI, or if all the material is poisoned, it absolutely kills any open-source or small endeavor. OpenAI and company will happily pay for these databases; it means they keep their moat and can easily push subscription services down our throats. The artists still won’t get a dime, since the datasets will come from Instagram, Getty, Adobe, etc., but consumers will get heavily fucked.