if brute force isn’t working you’re not using enough

This is the best summary I could come up with:

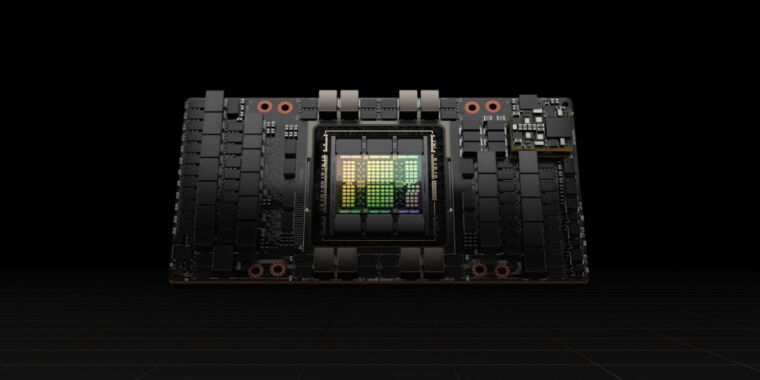

The US acted aggressively last year to limit China’s ability to develop artificial intelligence for military purposes, blocking the sale there of the most advanced US chips used to train AI systems.

China’s leading Internet companies have placed orders for $5 billion worth of chips from Nvidia, whose graphical processing units have become the workhorse for training large AI models.

Besides reflecting demand for improved chips to train the Internet companies’ latest large language models, the rush has also been prompted by worries that the US might tighten its export controls further, making even these limited products unavailable in the future.

The lower transfer rate in China means that users of the chips there face longer training times for their AI systems than Nvidia’s customers elsewhere in the world—an important limitation as the models have grown in size.

That means that Chinese Internet companies that trained their AI models using top-of-the-line chips bought before the US export controls can still expect big improvements by buying the latest semiconductors, he said.

Many Chinese tech companies are still at the stage of pre-training large language models, which burns a lot of performance from individual GPU chips and demands a high degree of data transfer capability.

The original article contains 938 words, the summary contains 203 words. Saved 78%. I’m a bot and I’m open source!

That means that Chinese Internet companies that trained their AI models using top-of-the-line chips bought before the US export controls can still expect big improvements by buying the latest semiconductors, he said.

This hints that there might be a way to ‘un-hobble’ these.

Yeah I think the Chinese know a bit about semiconductors.

They're playing catch-up specifically when it comes to fabs (IIRC they have 28nm working, with 20nm this year), which puts them at around 2014 process-wise. It's pretty significant, as starting up a fab is not easy, especially when the U.S., the Netherlands, and Japan all want to prevent a competitor.

In terms of GPUs, Moore Threads (a newish company started by a former head of Nvidia China) has a “functional” GPU on 12nm (it works in some things, but for the most part requires a shit ton of driver work). 12nm would be 2017/2018-level hardware, but the driver feels decades behind.

As for CPUs, China has a joint venture with AMD, so it can use basic levels of Ryzen tech for its own x86 CPUs; otherwise it has its own internal RISC-V and Arm-based designs that do fine.

NVIDIA: :hobbles semiconductors:

China: “Oh no! Anyway”