Surprised pikachu face

So what exactly is open about their AI?

It’s called OpenAI because they are open to stealing content to train their AI

“I made this!”

You made this?

…I made this!

Except in the AI version it has the wrong number of fingers, and the text is spelled wrong.

I͖ͭ̍̀̏͂̋̏͛ ͕͚̱̗̭͗͗͑ͥͨ̆ͥ͊ͅm̤̻͕̪̥͓͍̿̑̚a̜̝͖̯̰̦͐ͭ̄͋c̠̹̱̱̖ͦ̿̋͗̎l̝̭͚͇̎ͧe͙͕͂͆ ̳̩̦͙̯̮̙t̘̯̯ͣͧ̍ȉ̩̜́̏̂ͯ̉͑̄s̪͖̎ͬ̐͆

I just used ChatGPT to copy this, so I’m sorry fellas, but actually now I made this.

Can’t argue with objective truth

shit, bro. Deep

“Don’t tell me what to do, bro!”

Open(your fucking wallet)AI

It’s criminal they’re keeping the name OpenAI

They put “open” in their name to attract good talent and investment, and so people would have a soft spot for them when they collect tons of data to build their product.

Their internal chats, released in the Musk lawsuit, reveal that they (meaning the top brass) knew they were going to switch to a for-profit model. But they still lied to everybody about their intentions.

Reminder: there are locally run LLMs. Right now is a vital time for open source to fight against closed source in the AI arms race.

Another good resource to help people find models https://llm.extractum.io

Or just straight up install https://ollama.com

I like Ollama, and recommend it to tinker, but I admit this “LLM Explorer” is quite neat thanks to sections like “LLMs Fit 16GB VRAM”

Ollama just works but it doesn’t help to pick which model best fits your needs.

pick which model best fits your needs.

What need do I have that justifies the effort of installing all this locally? Websites win in terms of convenience.

I want to work on my stuff in peace and in private without worrying about a company grabbing my stuff and using it for themselves and to give/sell it to other outfits, including the government. “If you have nothing to hide…” is bullshit and needs to die.

Good point. Everything you feed into ChatGPT is stored for future reference.

I don’t think I understand your point, are you saying there is no benefit in running locally and that Websites or APIs are more convenient?

I already have Stable Diffusion on a local machine. I was trying to find motivation to install an LLM locally. You answered my question in a different response.

For use cases where customization helps while quality doesn’t matter much due to scale, e.g. spam, LLMs and related tools are amazing.

At the same time, the trouble with local LLMs is that they’re very resource heavy. Your average household computer isn’t going to be able to run one with much usability or speed.

Which, you know, is fine. Maybe if people had an idea of how much power is required to run them, they would think twice before using a gigawatt to output a poem about farts, and perhaps even wonder how OpenAI can offer that for free. Btw, a 7b model should run ok on any PC with at least 16GB of RAM and a modern processor/GPU.

Phi 3 can run on pretty low specs (requires 4 GB of RAM) and has relatively good output.

It’s a lot slower than ChatGPT, but on my integrated-graphics i7 laptop it ran decently, definitely enough to be usable. Also, there are different models to play around with; some are faster but worse and some are smarter but slower.
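
For anyone wondering where RAM figures like the ones above come from, here is a rough back-of-the-envelope sketch. It only counts the memory needed to hold the weights (parameter count times bits per weight) and ignores context cache, activations, and whatever your OS is using, so treat the results as a floor, not a promise. The bit widths (16, 8, 4) are my assumption about common quantization levels, the function name is made up for illustration, and the ~3.8B parameter count for Phi 3 mini is from memory.

```python
def estimate_weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Rough memory needed just to store the model weights, in GB (10^9 bytes).

    Deliberately ignores context cache, activations, and runtime overhead.
    """
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Parameter counts are approximate; the Phi 3 mini figure is an assumption.
    models = [("7B model", 7e9), ("Phi 3 mini (~3.8B)", 3.8e9)]
    for name, params in models:
        for bits in (16, 8, 4):
            gb = estimate_weight_memory_gb(params, bits)
            print(f"{name} at {bits}-bit: ~{gb:.1f} GB for weights alone")
```

By this estimate a 7B model needs about 14 GB at 16-bit and about 3.5 GB at 4-bit, which is roughly why quantized 7B models are workable on a 16 GB machine and why smaller models fit in much less.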

Almost like Sam Altman is just another run of the mill tech bro scam guy.

I don’t think he is a “tech bro scam guy”. I think he is worse: he is smart and has a documented track record of lying. Unlike other tech bros, he actually knows the capabilities and limits of his products, and he still lies and makes them out to be something they’re not.

I hope OpenAI is going to serve as a radicalizing example to all the engineers, who fell for the “ethical guy/company” rhetoric, that the minority-controlled corporate structures they’re used to cannot withstand the push for profit. I hope this will make more of them choose majority-controlled structures for their startups and demand unions in existing corpos.

I mean, I was already radicalized in that respect, but it’s definitely reaffirming that radicalization.

But also: I fuckin told you so. This progression was so blindingly obvious from the get-go.

OpenAI on that enshittification speedrun any% no-glitch!

Honestly though, they’re skipping right past the “be good to users to get them to lock in” step. They can’t even use the platform capitalism playbook because it costs too much to run AI platforms. Shit is egregiously expensive and doesn’t deliver sufficient return to justify the cost. At this point I’m ~80% certain that AI is going to be a dead tech fad by the end of this decade because the economics just don’t work now that the free money era has ended.

It will fall through much faster than that. I’m thinking two years, tops.

If your username is any prediction then it will be consumed by Lemmy… 🎶downtown🎶

The fact that Silicon Valley interests effortlessly shrugged off the non-profit board’s attempt to hit the kill switch last year, and now are preparing to take the company commercial despite the deliberate design otherwise, becomes much more interesting when you consider the theory that corporations are a form of artificial superintelligence.

If the AI idealists can’t stand up to basic forces of capitalism, how do they expect to control an actually dangerous AGI?

My guess is they don’t expect to. I guess that that is one of the reasons they seem to not care about out of control climate change; burn it all down before it all literally burns down.

Yeah, the people leading the “AGI will save us” charge are the same as megachurch pastors.

They don’t believe it, they just want their bank account limitless before they go into oblivion.

I kinda liked the text you linked. Here’s a quote.

There are also structural changes that can be made to corporations to realign their values system with human welfare. Corporate charters can be amended to optimize for a triple bottom line of social, environmental, and financial outcomes (the so-called “triple Ps” of people, planet, and profit.)

This reminds me of what we are trying to do where I live. The hard thing is this requires a lot of work and it doesn’t just go against the corporate agenda; it goes against the normal lifestyle most everyone around us lives. It has made me want to quit sometimes.

But then again, true life is in true living among real people and real things, not in daydreaming of better days.

Probably has to be renamed to “ClosedAI” then.

“ClosedAI”

Step 1. Make an AI that hoovers up content.

Step 2. When owners of content complain about privacy violations and copyright infringement, allay their fears. This AI is for the Good of Humanity.

Step 3. ???

Step 4. Profit.

Yet another example of doing crime at a big enough scale that you get rewarded for it. That’s what this country was built on.

… To the surprise of <checks notes> absolutely nobody

Actually I have a question and I admit knowing nothing of the legal framework here but…

Isn’t it absolutely ridiculous that a not-for-profit entity can exist solely for the purpose of developing a closed-source piece of software, demand to train it for free on copyrighted material, and then just switch to a for-profit entity?

Sounds 100% like tax avoidance. Like me registering a charity so I can throw a mega concert/party privately, secure preferential treatment on supplies, get discounts on artists or even free performances, and then switch to for-profit as I start selling tickets.

Originally all their work was supposed to be published and shared with the world, hence the “open” in OpenAI. However, somewhere along the way they spun off a for-profit arm from the original company and started pulling everything in that direction.

OpenAI: It’s not fair to charge us to use copyrighted works.

Also OpenAI: Also you have to pay us for using them.

That’s why all human creative works done online need to be bean related. To fuck up the data stream and make it unintelligible for AIs and marketing algorithms.

How exactly does one “outgrow” “AGI for the benefit of all humanity”?

OpenAI Charter https://openai.com/charter

Our primary fiduciary duty is to humanity. We anticipate needing to marshal substantial resources to fulfill our mission, but will always diligently act to minimize conflicts of interest among our employees and stakeholders that could compromise broad benefit.

Great read

As Ed said, Sam Altman has been a plague.

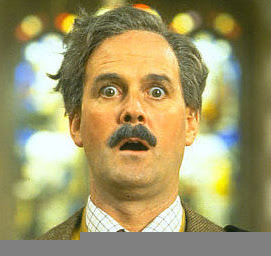

Ah, the John Cleesachu.

Shocked Cleesachu

My favorite part of this image is the image corruption on the bottom lol. Hopefully that wasn’t a local issue on my side or I’m gonna look insane.

Somewhere along the way my copy got janked. I liked it so I keep using it.

Just want to point out that it absolutely is possible to train an AI that will keep track of its sources for inspiration and can attribute those when it makes a response.

Meaning creators could be compensated for their parts of AI generated stuff, if anyone wanted to.

Doesn’t Phind do this already? I haven’t used it much but I remember it showing its sources for answers of code-related stuff

I use Phind for solving computer problems. It does cite the sources it uses, at least for distro and general Linux issues. So far, it’s been a very good resource when I’ve needed it.

Other than citing the entire training data set, how would this be possible?

The entire training set isn’t used in each permutation. Your keywords are building the samples based on metadata tags tied back to the original images.

If you ask for “Iron Man in a cowboy hat”, the toolset will reach for some catalog of Iron Man images and some catalog of cowboy hat images and some catalog of person-in-cowboy-hat images, when looking for a basis of comparison as it renders the image.

These would be the images attributed to the output.

Do you have a source for this? This sounds like fine-tuning a model, which doesn’t prevent data from the original training set from influencing the output. The method you described would only work if the AI were trained from scratch on only images of Iron Man and cowboy hats. And I don’t think that’s how any of these models work.

I think that there are some people working on this, and a few groups that have claimed to do it, but I’m not aware of any that actually meet the description you gave. Can you cite a paper or give a link of some sort?

Wild for a company that’s never made a profit

These companies do not make a profit on paper but have already made millions for others.

It’s all smoke and mirrors

Oh it’s made plenty for Nvidia.