There is… a lot going on here, and it’s part of a broader trend which is probably not for the better and speaks to some deeper-seated issues we currently have in society. A choice moment from the article, on another influencer doing a similar thing earlier this year and how that went:

Siragusa isn’t the first influencer to create a voice-prompted AI chatbot using her likeness. The first would be Caryn Marjorie, a 23-year-old Snapchat creator, who has more than 1.8 million followers. CarynAI is trained on a combination of OpenAI’s ChatGPT-4 and some 2,000 hours of her now-deleted YouTube content, according to Fortune. On May 11, when CarynAI launched, Marjorie tweeted that the app would “cure loneliness.” She’d also told Fortune that the AI chatbot was not meant to make sexual advances. But on the day of its release, users commented on the AI’s tendency to bring up sexually explicit content. “The AI was not programmed to do this and has seemed to go rogue,” Marjorie told Insider, adding that her team was “working around the clock to prevent this from happening again.”

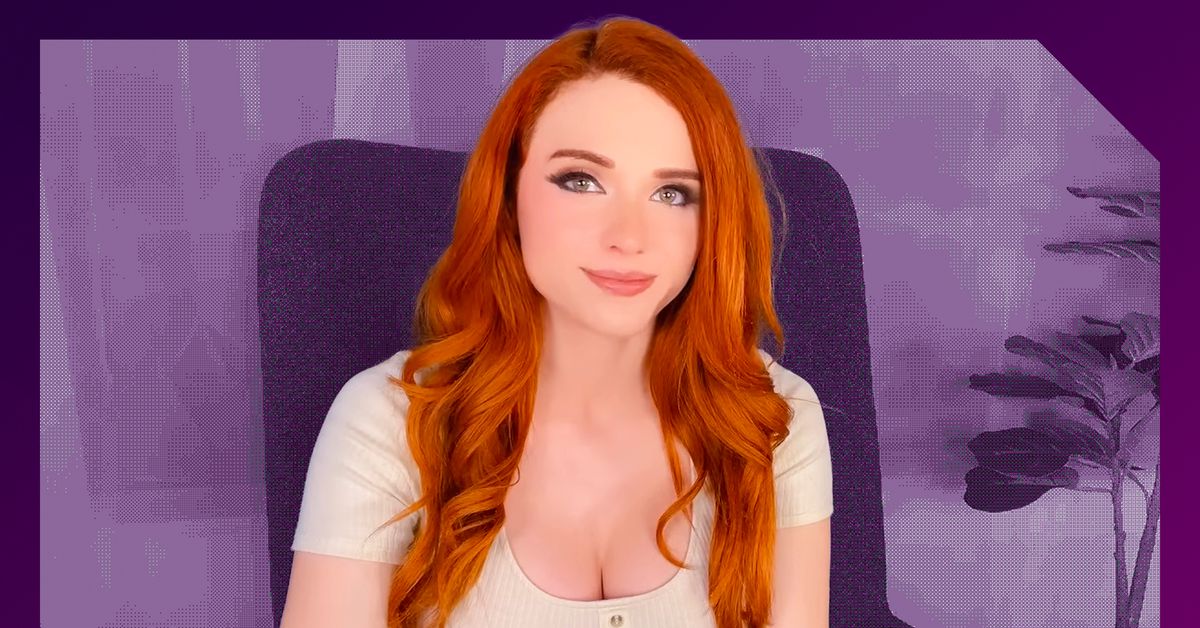

I don’t agree with Amouranth’s statement of “I don’t think it’s harmful for people to form a social aspect with it. As long as we’re aware that it’s not a real person.” You can tell people it’s not real all you want, but when you model it off your voice/likeness, you’re inherently attaching yourself to it. I’m sure the majority of people will understand it’s an AI modeled off her voice, but there will always be some that get more attached to it than they should. Just saying “I don’t think it’s harmful because people should know it’s not real” doesn’t magically solve the issue and cause everyone to behave.

Replika is another smutty-type AI, but it’s clearly designated as such, and the avatar is pretty generic-looking, which is more of what I’d want to see, at least for now. It being text-only also helps keep that separation. Who knows, this could end up fine, but it’s closer to diving in the deep end before people have waded in to see what the water is like.

Exactly. Celebrities already have a problem with people forming unhealthy parasocial relationships with them when the interactions are unidirectional; having something answering back is going to make it much, much worse. Hopefully no one is going to be seriously hurt in the aftermath of this money-grubbing stunt.

Yeah, and people got extremely attached to their Replikas, to the point that the subreddit stickied a suicide hotline number when the app removed sexual roleplay.

The slippery slope of “I know it’s not real, but we’re so close and it’s based off of you, so therefore you should love me too” does not feel like that much of a stretch.